Cachy: How we made our notebooks 60x faster.

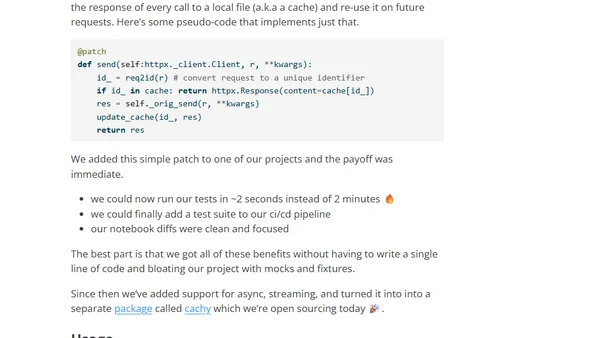

The article describes how Answer.AI built Cachy, a Python package that patches the httpx library to automatically cache responses from LLM providers such as OpenAI and Anthropic. Caching removes slow, non-deterministic LLM calls from tests and development, which makes notebooks 60x faster, enables CI/CD integration, and produces cleaner code diffs without manual mocking.
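To make the patching idea concrete, here is a minimal sketch of the general technique: wrap a client's send method so that identical requests are answered from a cache file on disk. It is not Cachy's actual code or API. `FakeClient`, `enable_cache`, and the example URL are hypothetical names for illustration only, and a stand-in class is used in place of httpx so the example runs without a network connection or third-party packages.

```python
import hashlib
import json
import tempfile
from pathlib import Path


class FakeClient:
    """Hypothetical stand-in for an HTTP client (not httpx)."""

    calls = 0  # counts how often the "network" is really hit

    def send(self, method, url, body=""):
        FakeClient.calls += 1
        return f"response #{FakeClient.calls} for {method} {url}"


def enable_cache(client_cls, cache_path):
    """Replace client_cls.send with a version backed by a JSON file."""
    cache_path = Path(cache_path)
    original_send = client_cls.send

    def cached_send(self, method, url, body=""):
        # Key the cache on everything that identifies the request.
        raw_key = f"{method}\n{url}\n{body}".encode()
        key = hashlib.sha256(raw_key).hexdigest()
        cache = json.loads(cache_path.read_text()) if cache_path.exists() else {}
        if key not in cache:
            # Cache miss: make the real call once and persist the result.
            cache[key] = original_send(self, method, url, body)
            cache_path.write_text(json.dumps(cache, indent=2))
        return cache[key]

    client_cls.send = cached_send


cache_file = Path(tempfile.mkdtemp()) / "cache.json"
enable_cache(FakeClient, cache_file)

client = FakeClient()
payload = '{"prompt": "hi"}'
first = client.send("POST", "https://api.example.com/v1/messages", payload)
second = client.send("POST", "https://api.example.com/v1/messages", payload)
```

In this sketch the second call never reaches the original `send`, so repeated runs return the same response instantly. Because the cache is a plain text file, it can be committed alongside the notebook, which is one plausible way to get the stable diffs and CI-friendly runs the summary mentions.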
