Autoresearching Apple's "LLM in a Flash" to run Qwen 397B locally

Explores using Apple's "LLM in a Flash" research to run the 397B-parameter Qwen model locally on a MacBook by streaming weights from the SSD.

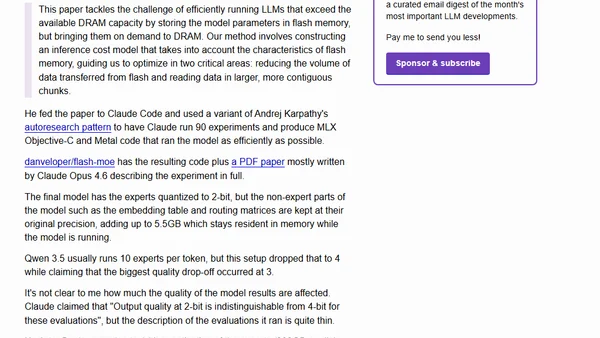

Exploring the UMAP-MLX project, which achieves up to 46x speedups for UMAP using Apple's MLX, with performance benchmarks.

A guide to parakeet-mlx, a project porting NVIDIA's Parakeet ASR model to Apple's MLX framework for fast, local audio transcription.