Fine-tune Falcon 180B with DeepSpeed ZeRO, LoRA and Flash Attention

A technical guide on fine-tuning the massive Falcon 180B language model using DeepSpeed ZeRO, LoRA, and Flash Attention for efficient training.

An introduction to Semantic Kernel's Planner, a tool for automatically generating and executing complex AI tasks using plugins and natural language goals.

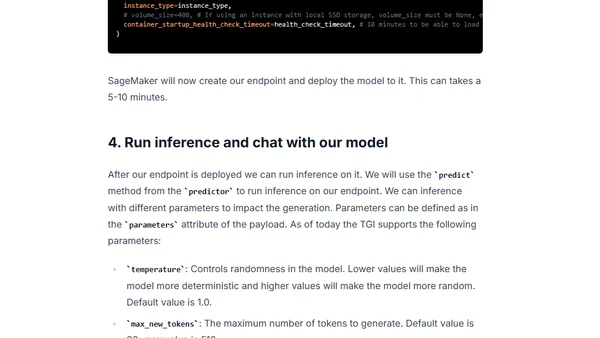

Guide to deploying open-source LLMs like BLOOM and Open Assistant to Amazon SageMaker using Hugging Face's new LLM Inference Container.

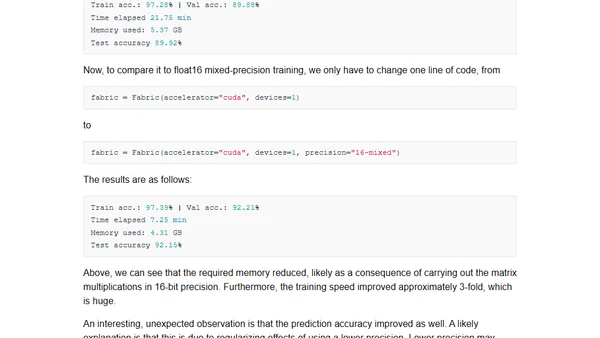

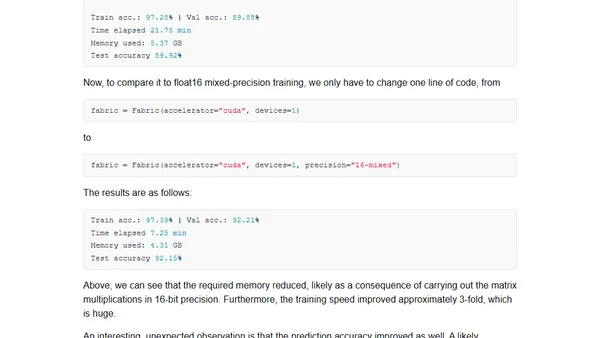

Explores how mixed-precision training techniques can speed up large language model training and inference by up to 3x, reducing memory use.

Exploring mixed-precision techniques to speed up large language model training and inference by up to 3x without losing accuracy.

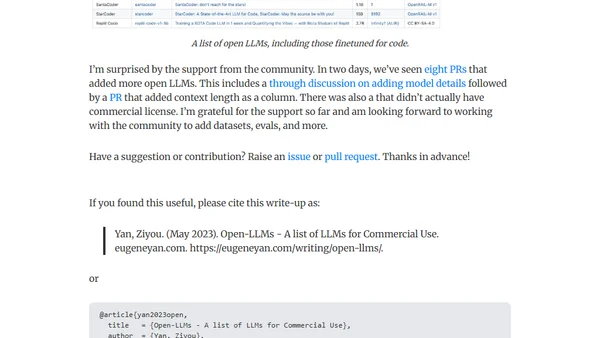

A curated list of open-source Large Language Models (LLMs) available for commercial use, including community-contributed updates and details.

A technical tutorial on fine-tuning a 20B+ parameter LLM using PyTorch FSDP and Hugging Face on Amazon SageMaker's multi-GPU infrastructure.

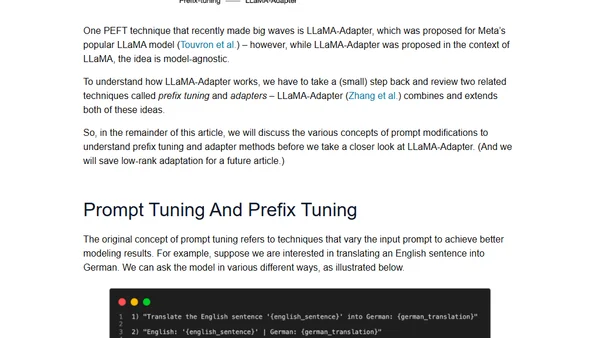

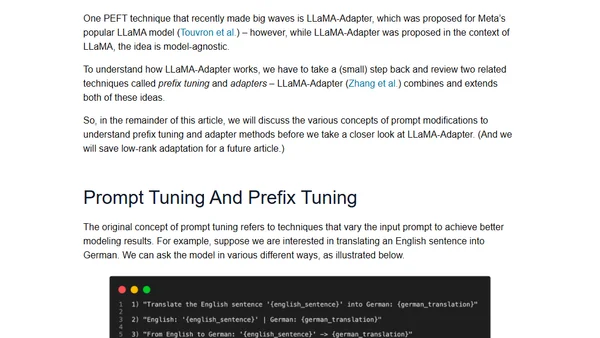

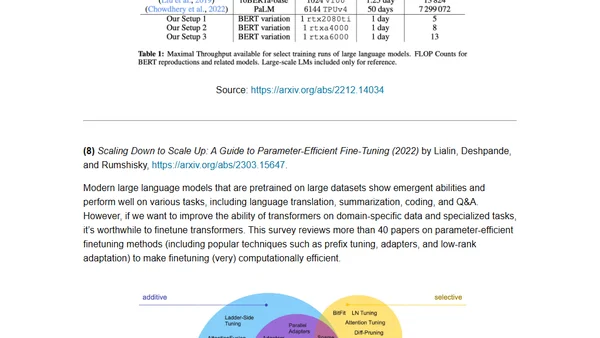

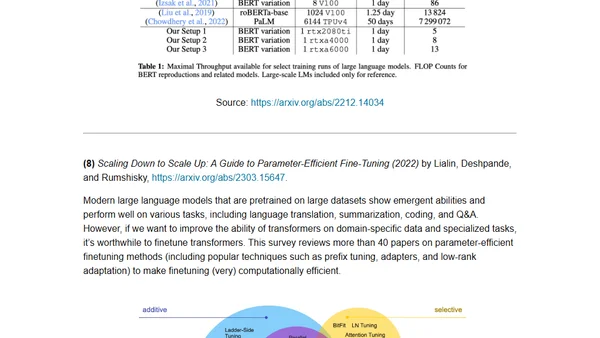

A guide to parameter-efficient finetuning methods for large language models, covering techniques like prefix tuning and LLaMA-Adapters.

Explains parameter-efficient finetuning methods for large language models, covering techniques like prefix tuning and LLaMA-Adapters.

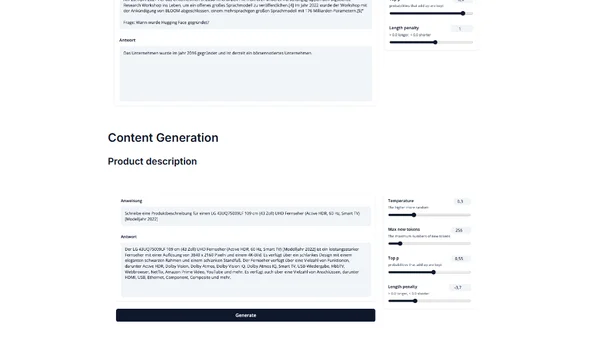

Introduces IGEL, an instruction-tuned German large language model based on BLOOM, for NLP tasks like translation and QA.

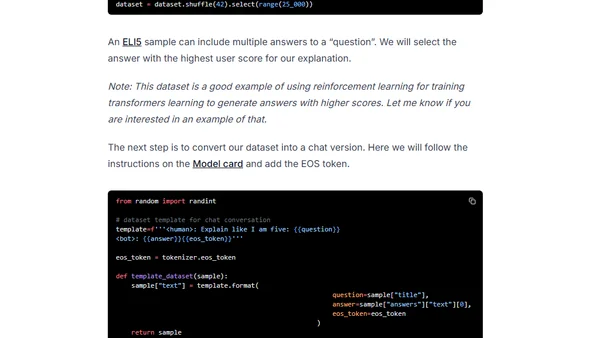

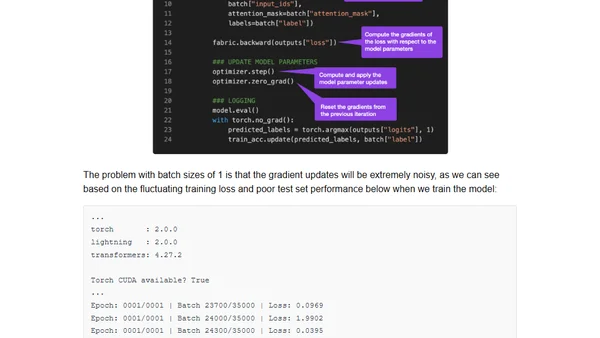

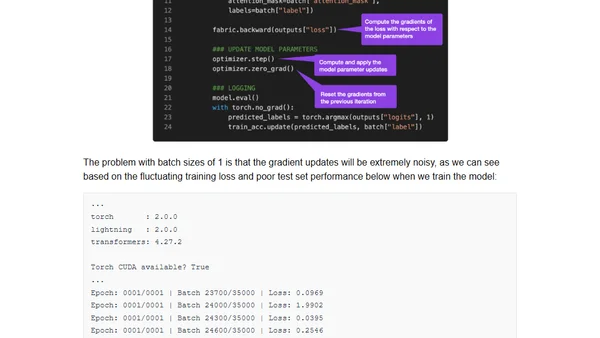

Guide to finetuning large language models on a single GPU using gradient accumulation to overcome memory limitations.

A guide to finetuning large language models like BLOOM on a single GPU using gradient accumulation to overcome memory limits.

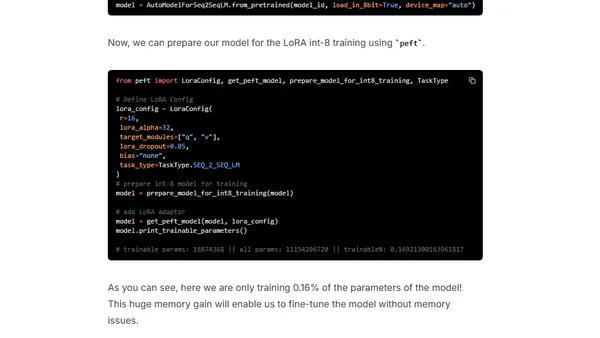

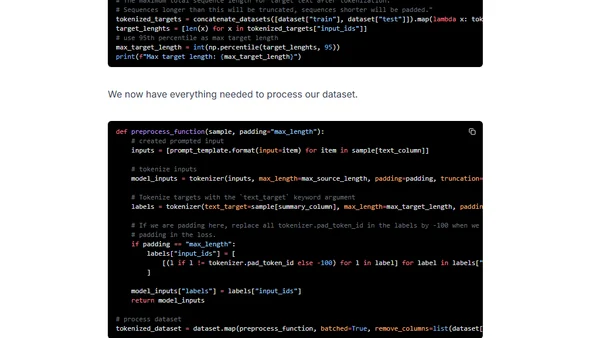

A technical guide on fine-tuning the large FLAN-T5 XXL model efficiently using LoRA and Hugging Face libraries on a single GPU.

Guide to fine-tuning the large FLAN-T5 XXL model using Amazon SageMaker managed training and DeepSpeed for optimization.

Argues against the 'lossy compression' analogy for LLMs like ChatGPT, proposing instead that they are simulators creating temporary simulacra.

A curated reading list of key academic papers for understanding the development and architecture of large language models and transformers.

Discusses the limitations of AI chatbots like ChatGPT in providing accurate technical answers and proposes curated resources and expert knowledge as future solutions.

Analyzes the limitations of AI chatbots like ChatGPT in providing accurate technical answers and discusses the need for curated data and human experts.

Learn to optimize GPT-J inference using DeepSpeed-Inference and Hugging Face Transformers for faster GPU performance.