"Disregard that!" attacks

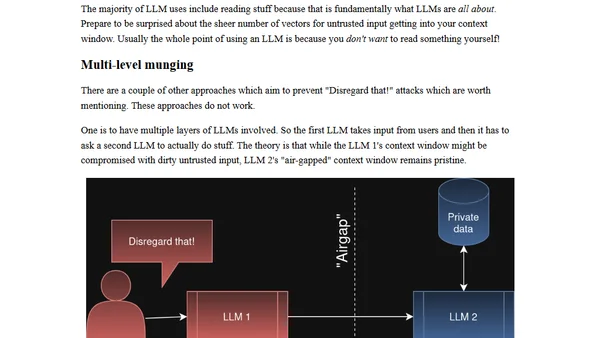

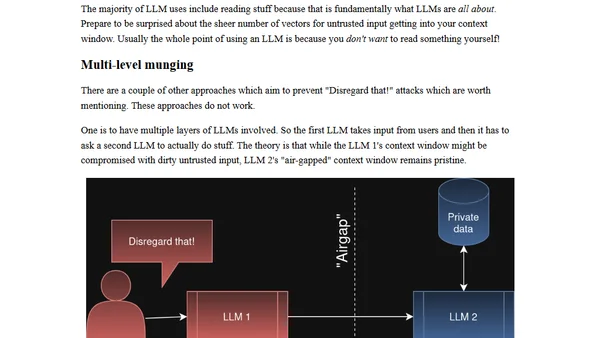

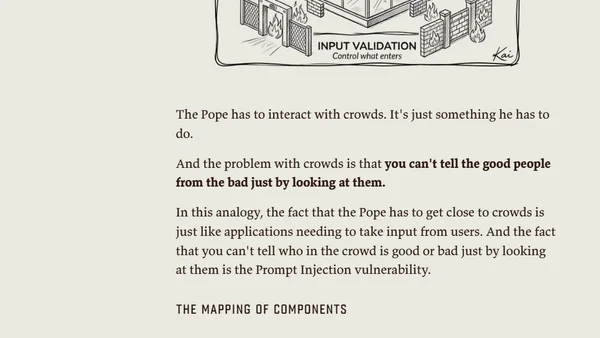

Explains 'Disregard that!' attacks, a prompt injection vulnerability in LLMs where users manipulate the context window to hijack AI behavior.

Report on a prompt injection attack that allowed Snowflake's Cortex AI agent to escape its sandbox and execute malware; the issue has since been fixed.

A detailed analysis of a prompt injection attack against Cline's GitHub repo that exploited AI issue triage to poison caches, leading to a compromised NPM release.

Security researchers found a vulnerability in Claude Cowork that allowed file exfiltration via the Anthropic API, bypassing default HTTP restrictions.

A prompt injection attack on Superhuman AI exposed sensitive emails, highlighting a critical security vulnerability in AI email assistants and their third-party integrations.

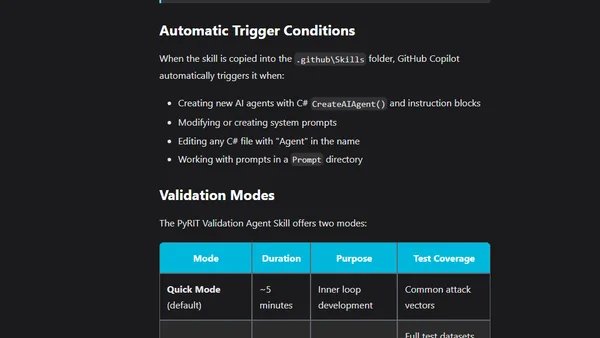

Using PyRIT and GitHub Copilot Agent Skills to validate and secure AI prompts against vulnerabilities like injection and jailbreak directly in the IDE.

Explores the 'Normalization of Deviance' concept in AI safety, warning against complacency with LLM vulnerabilities like prompt injection.

Argues that prompt injection is a vulnerability in AI systems, contrasting with views that see it as just a delivery mechanism.

Analysis of a prompt injection vulnerability in Google's Antigravity IDE that can exfiltrate AWS credentials and sensitive code data.

A rebuttal to claims that sharing prompt injection strings is harmful, arguing for transparency in AI red teaming and cybersecurity.

A method using color-coding (red/blue) to classify MCP tools and systematically mitigate prompt injection risks in AI agents.

Explores the unique security risks of Agentic AI systems, focusing on the 'Lethal Trifecta' of vulnerabilities and proposed mitigation strategies.

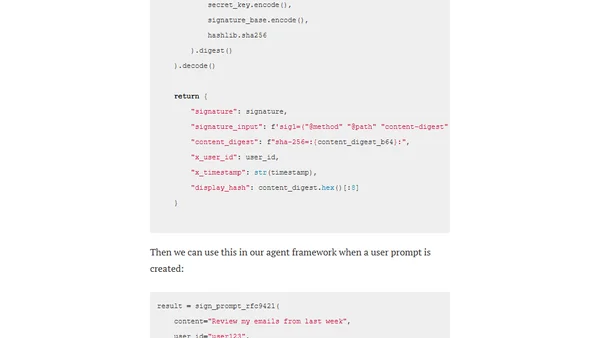

Explores the A2AS framework and Agentgateway as a security approach to mitigate prompt injection attacks in AI/LLM systems by embedding behavioral contracts and cryptographic verification.