LLM Security Automation Isn’t a Drop-In Scanner Yet

Analysis of structural failure modes when using LLMs as security scanners in agentic workflows, with measurement ideas and evidence.

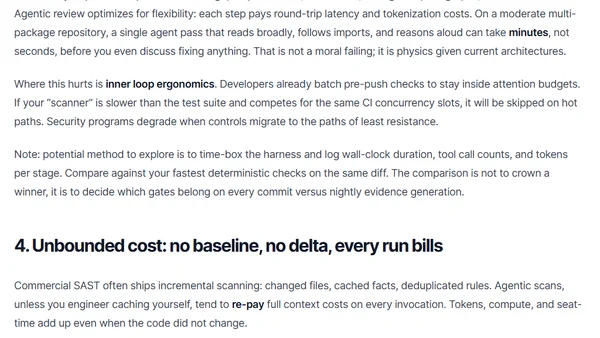

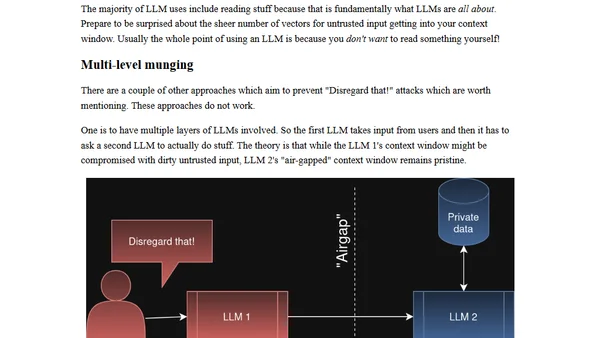

Explains 'Disregard that!' attacks, a form of prompt injection in which users manipulate the context window to hijack an LLM's behavior.

Argues that prompt injection is a vulnerability in AI systems, contrasting with views that see it as just a delivery mechanism.

A rebuttal to claims that sharing prompt injection strings is harmful, arguing for transparency in AI red teaming and cybersecurity.

Explores how LLMs could enable malware to find personal secrets for blackmail, moving beyond simple ransomware attacks.

Martin Fowler's blog fragments on LLM browser security, AI-assisted coding debates, and the literary significance of the Doonesbury comic strip.

Explores the unique security risks of Agentic AI systems, focusing on the 'Lethal Trifecta' of vulnerabilities and proposed mitigation strategies.

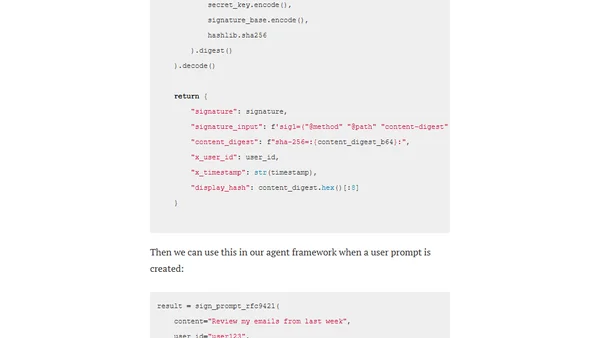

Explores the A2AS framework and Agentgateway as a security approach to mitigate prompt injection attacks in AI/LLM systems by embedding behavioral contracts and cryptographic verification.

Explores strategies and Azure OpenAI features to mitigate inappropriate use and enhance safety in AI chatbot implementations.