DeepSeek’s Multi-Head Latent Attention

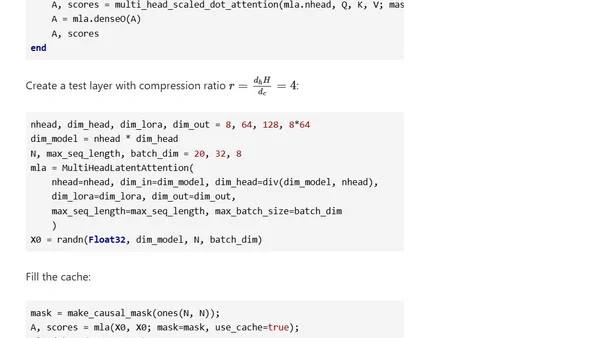

This article provides a detailed analysis of DeepSeek's Multi-Head Latent Attention (MLA), a key innovation in their V3 and R1 models. It explains how MLA combines attention, KV caching, and LoRA to compress vectors, reducing inference cache size. The post includes the mathematical foundations, implementation details in Julia using Flux.jl, and discusses potential performance trade-offs and enhancements like weight absorption and decoupled RoPE.
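To make the cache-size claim concrete, here is a rough back-of-the-envelope sketch in Python (not the article's Julia code). It assumes that standard multi-head attention caches one key and one value vector per head for every token, while MLA caches a single shared latent vector plus, when decoupled RoPE is used, one small positional key. The function names and the dimensions in the usage lines are hypothetical, chosen only for illustration.

```python
def mha_cache_floats_per_token(n_heads: int, d_head: int) -> int:
    """Values cached per token per layer by standard multi-head attention.

    One key vector and one value vector of size d_head are kept for each head.
    """
    return 2 * n_heads * d_head


def mla_cache_floats_per_token(d_latent: int, d_rope: int = 0) -> int:
    """Values cached per token per layer under the MLA assumption above.

    Only the compressed latent (d_latent) is stored, plus an optional
    shared RoPE key (d_rope) when decoupled RoPE is used.
    """
    return d_latent + d_rope


# Illustrative dimensions only; they are not taken from the article.
mha = mha_cache_floats_per_token(n_heads=8, d_head=64)    # 2 * 8 * 64
mla = mla_cache_floats_per_token(d_latent=128, d_rope=32)  # 128 + 32
print(f"MHA: {mha} values/token, MLA: {mla} values/token, ratio: {mha / mla:.1f}x")
```

The saving comes at a cost: keys and values have to be reconstructed from the latent with up-projection matrices at attention time, which is the overhead that weight absorption is meant to reduce.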
