Introduction to Data Engineering Concepts | Data Modeling Basics

An introduction to data modeling concepts, covering OLTP vs OLAP systems, normalization, and common schema designs for data engineering.

Explains streaming data fundamentals, how streaming systems work, their use cases, and challenges compared to batch processing.

Explains batch processing fundamentals for data engineering, covering core concepts, common tools, and why batch processing remains relevant in data workflows.

Explains core data engineering concepts, comparing ETL and ELT data pipeline strategies and their use cases.

An introduction to data engineering concepts, focusing on data sources and ingestion strategies like batch vs. streaming.

An introductory guide to data engineering, explaining its role, key concepts, and how it differs from data science in the modern data ecosystem.

A monthly roundup of curated links and articles on data engineering, Kafka, CDC, stream processing, and AI/ML topics.

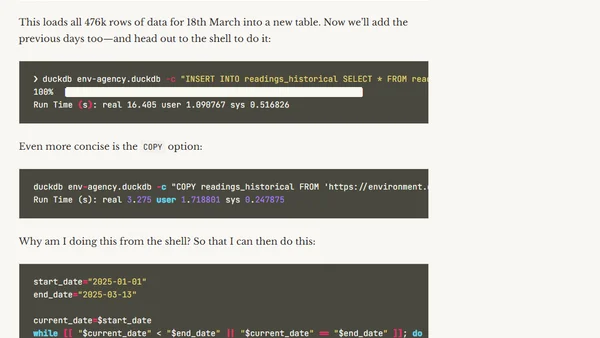

A guide to building a data pipeline using DuckDB, covering data ingestion, transformation, and analytics with real-world environmental data.

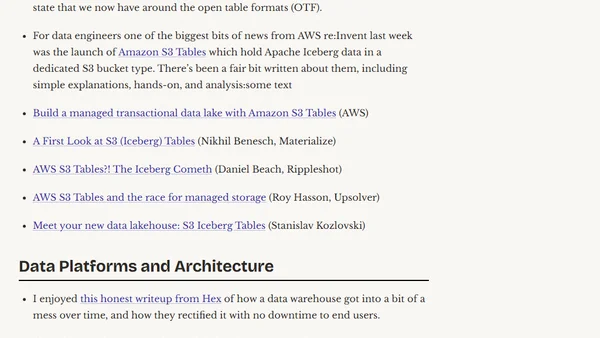

A monthly roundup of interesting links and articles about data engineering, databases, streaming tech, and data infrastructure.

A comprehensive 2025 guide to Apache Iceberg, covering its architecture, ecosystem, and practical use for data lakehouse management.

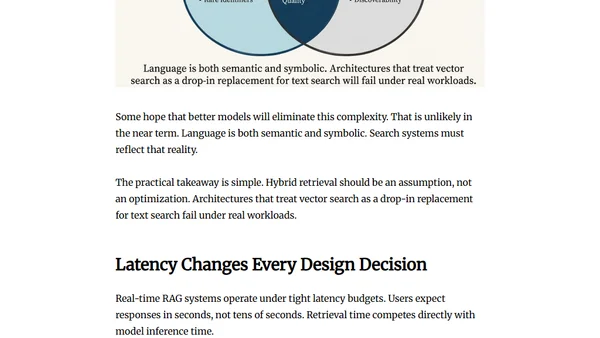

Argues that RAG system failures stem from data engineering issues like fragmented data and governance, not from model or vector database choices.

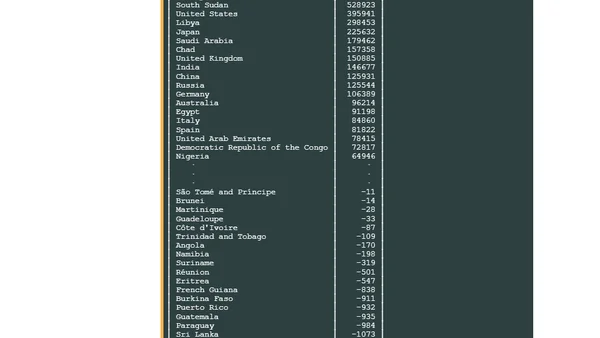

Overview of Overture Maps Foundation's updated global, open geospatial datasets, their partners, and data refresh strategy.

Monthly roundup of news and resources in data streaming, stream processing, and the Apache Kafka ecosystem, curated by industry experts.

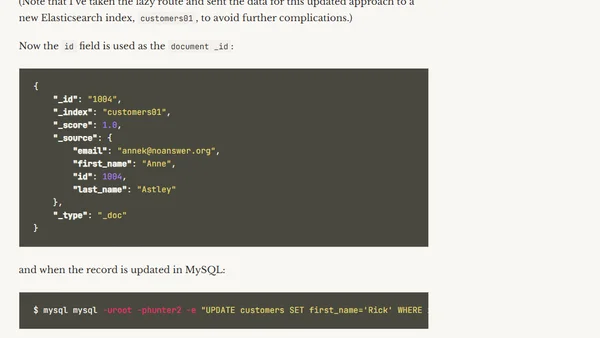

An overview of Apache Flink CDC, its declarative pipeline feature, and how it simplifies data integration from databases like MySQL to sinks like Elasticsearch.

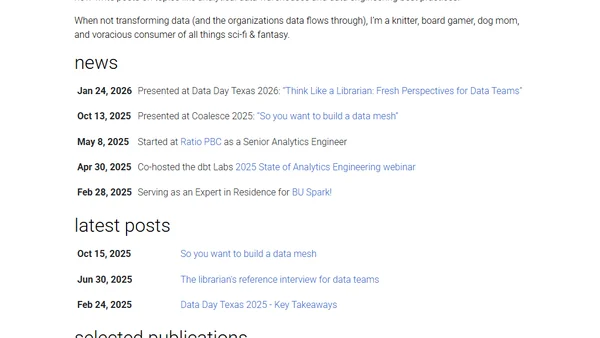

A profile of a Senior Analytics Engineer specializing in dbt, data mesh architecture, and applying library science principles to modern data teams.

Monthly roundup of news and developments in data streaming, stream processing, and the data ecosystem, featuring Apache Flink, Kafka, and open-source tools.

An introduction to Apache Parquet, a columnar storage file format for efficient data processing and analytics.

Explains for data engineers how Parquet handles schema evolution, including adding or removing columns and changing data types.

Explains the hierarchical structure of Parquet files, detailing how pages, row groups, and columns optimize storage and query performance.

A practical guide to reading and writing Parquet files in Python using PyArrow and FastParquet libraries.