Schema Evolution Without Breaking Consumers

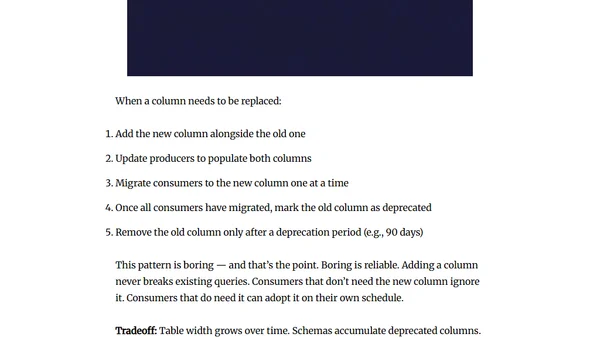

Explains how to safely evolve data schemas using API-like discipline to prevent breaking downstream systems like dashboards and ML pipelines.
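As a minimal sketch of that API-style discipline, the Python below compares a proposed schema version with the current one and lists the changes that would break existing consumers. The dict-based schema format, the `breaking_changes` function, and the rule set (only additive, nullable columns count as safe) are illustrative assumptions, not any particular tool's API.

```python
# Minimal sketch: treat a table schema like a public API and flag breaking changes.
# Assumption: a schema is a dict of column name -> {"type": str, "nullable": bool};
# this format and these rules are illustrative, not any specific tool's API.


def breaking_changes(old: dict, new: dict) -> list[str]:
    """Return reasons why `new` would break consumers written against `old`."""
    problems = []
    for name, spec in old.items():
        if name not in new:
            # Removals (and renames, which look like remove + add) break readers.
            problems.append(f"column removed: {name}")
            continue
        if spec["type"] != new[name]["type"]:
            problems.append(f"type changed: {name} {spec['type']} -> {new[name]['type']}")
        if not spec["nullable"] and new[name]["nullable"]:
            # Consumers may rely on the column never being null.
            problems.append(f"column became nullable: {name}")
    for name, spec in new.items():
        # A new column is safe only if old data and old writers can leave it out.
        if name not in old and not spec["nullable"]:
            problems.append(f"new non-nullable column: {name}")
    return problems


v1 = {"user_id": {"type": "int64", "nullable": False},
      "country": {"type": "string", "nullable": True}}
v2 = {**v1, "signup_ts": {"type": "timestamp", "nullable": True}}
v3 = {"user_id": {"type": "string", "nullable": False}}

print(breaking_changes(v1, v2))  # []
print(breaking_changes(v1, v3))  # ['type changed: user_id int64 -> string', 'column removed: country']
```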

A guide for data engineers on how Parquet handles schema evolution, including adding and removing columns and changing data types.
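The sketch below does not read Parquet itself; it models, in plain Python, a common name-based approach for a table backed by files written under different schema versions: a column added later reads as null from older files, a column dropped from the table schema is ignored, and a narrow numeric type can be widened. `resolve_row`, `READER_SCHEMA`, and the single int-to-float promotion rule are hypothetical.

```python
# Sketch: resolving rows from an older file against a newer reader schema.
# Assumptions (illustrative only): rows are dicts, the reader schema maps column
# name -> Python type, and int -> float is the only promotion allowed.

READER_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def resolve_row(file_row: dict, reader_schema: dict) -> dict:
    """Project one row from a file onto the reader schema, matching by column name."""
    out = {}
    for name, target_type in reader_schema.items():
        value = file_row.get(name)
        if value is None:
            out[name] = None  # column added after this file was written (or a stored null)
            continue
        if type(value) is int and target_type is float:
            value = float(value)  # widening promotion; exact for ints up to 2**53
        elif not isinstance(value, target_type):
            raise TypeError(f"{name}: cannot read {type(value).__name__} as {target_type.__name__}")
        out[name] = value
    # Columns in the file but not in the reader schema (dropped columns) are ignored.
    return out


old_row = {"order_id": 7, "amount": 12, "legacy_flag": True}
print(resolve_row(old_row, READER_SCHEMA))  # {'order_id': 7, 'amount': 12.0, 'currency': None}
```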

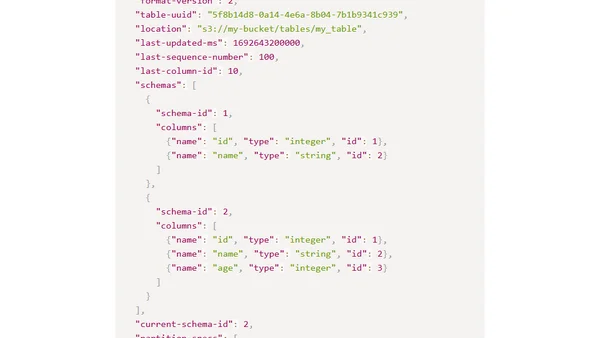

Explains the critical role and structure of the metadata.json file in Apache Iceberg, an open-source table format for data lakehouses.
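As a sketch, the Python below parses a trimmed metadata.json and looks up the table's current schema and current snapshot. The key names (`current-schema-id`, `schemas`, `current-snapshot-id`, `snapshots`, `manifest-list`) come from the Iceberg table spec; the values, paths, and the `current_state` helper are made up for illustration, and a real file carries many more required fields. Older schemas stay in the `schemas` list, and each column has a numeric field `id`: Iceberg tracks columns by id rather than by name, which is what allows renames without rewriting data files.

```python
# Sketch: finding the current schema and snapshot in an Iceberg metadata.json.
# Key names follow the Iceberg table spec; the values are hypothetical and the
# document is heavily trimmed (a real file has many more required fields).
import json

METADATA_JSON = """
{
  "format-version": 2,
  "location": "s3://example-bucket/warehouse/orders",
  "current-schema-id": 1,
  "schemas": [
    {"type": "struct", "schema-id": 0, "fields": [
      {"id": 1, "name": "order_id", "required": true, "type": "long"}]},
    {"type": "struct", "schema-id": 1, "fields": [
      {"id": 1, "name": "order_id", "required": true, "type": "long"},
      {"id": 2, "name": "currency", "required": false, "type": "string"}]}
  ],
  "current-snapshot-id": 3051729675574597004,
  "snapshots": [
    {"snapshot-id": 3051729675574597004, "timestamp-ms": 1700000000000,
     "manifest-list": "s3://example-bucket/warehouse/orders/metadata/snap-example.avro",
     "schema-id": 1}
  ]
}
"""


def current_state(metadata: dict) -> tuple[list[str], str]:
    """Return the current schema's column names and the current snapshot's manifest list."""
    schema = next(s for s in metadata["schemas"]
                  if s["schema-id"] == metadata["current-schema-id"])
    snapshot = next(s for s in metadata["snapshots"]
                    if s["snapshot-id"] == metadata["current-snapshot-id"])
    return [field["name"] for field in schema["fields"]], snapshot["manifest-list"]


columns, manifest_list = current_state(json.loads(METADATA_JSON))
print(columns)        # ['order_id', 'currency']
print(manifest_list)
```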

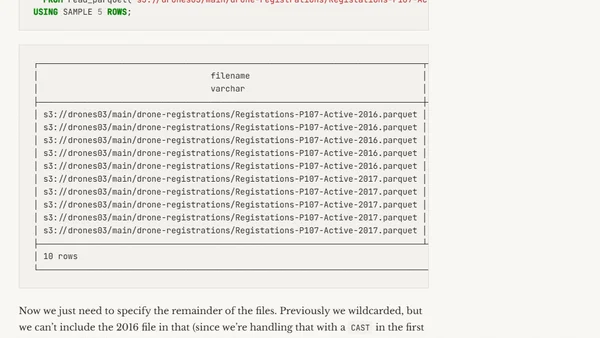

How to handle mismatched Parquet file schemas when querying multiple files in DuckDB using the UNION_BY_NAME option.
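In DuckDB the option is set per scan, for example `SELECT * FROM read_parquet('events_*.parquet', union_by_name = true);` (the file glob is hypothetical), which combines files by column name instead of by position and fills columns a file lacks with NULL. The plain-Python sketch below models only that semantics; the `union_by_name` function, the list-of-dicts stand-in for files, and the first-seen column ordering are assumptions, and DuckDB's actual column order and type unification may differ.

```python
# Sketch of union-by-name semantics over "files" whose schemas differ.
# Each file is a list of row dicts here; a real engine would read Parquet.


def union_by_name(*files: list[dict]) -> tuple[list[str], list[tuple]]:
    # Collect every column name across all files, in first-seen order.
    columns: list[str] = []
    for rows in files:
        for row in rows:
            for name in row:
                if name not in columns:
                    columns.append(name)
    # Align every row to the combined column list; missing columns become None.
    aligned = [tuple(row.get(name) for name in columns) for rows in files for row in rows]
    return columns, aligned


jan = [{"id": 1, "country": "DE"}]
feb = [{"id": 2, "plan": "pro", "country": "FR"}]  # new column inserted mid-schema
cols, rows = union_by_name(jan, feb)
print(cols)  # ['id', 'country', 'plan']
print(rows)  # [(1, 'DE', None), (2, 'FR', 'pro')]
```

A positional union of these two files would have put `plan` values under `country`; matching by name is what keeps each value in its own column.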

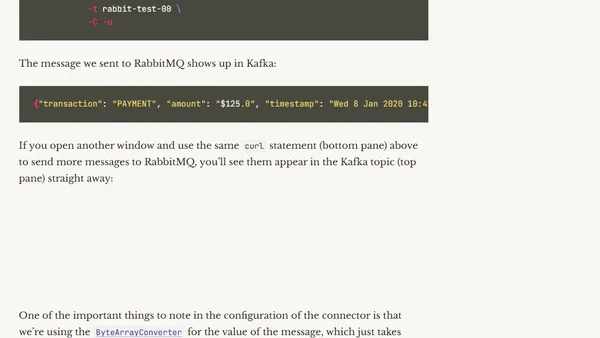

A technical guide on integrating RabbitMQ with Kafka using Kafka Connect, including setup, schema handling, and use cases.

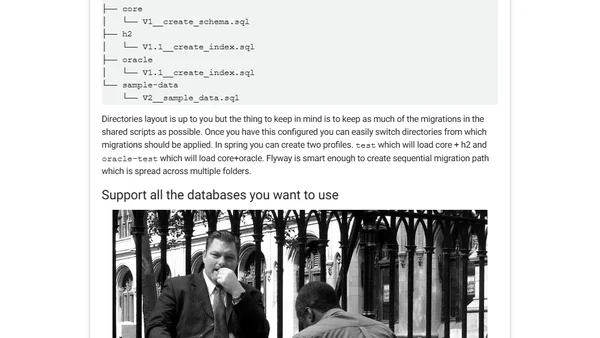

A guide to best practices for database schema migrations, focusing on tools like Flyway and Hibernate for evolving applications.
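Flyway's versioned migrations are SQL files named `V<version>__<description>.sql`, applied in version order and recorded in a history table so each one runs only once. The standard-library sketch below imitates that idea against SQLite; it is not Flyway's implementation, and the `migrate` and `version_key` helpers, the `schema_history` table, and the sample migrations are hypothetical.

```python
# Sketch of Flyway-style versioned migrations using only the standard library.
# Hypothetical pieces: the MIGRATIONS dict (standing in for files on disk),
# the schema_history table, and the helper functions. Not Flyway's own code.
import re
import sqlite3

MIGRATIONS = {
    "V2__add_email_to_person.sql": "ALTER TABLE person ADD COLUMN email TEXT",
    "V1__create_person.sql": "CREATE TABLE person (id INTEGER PRIMARY KEY, name TEXT NOT NULL)",
}
NAME_PATTERN = re.compile(r"V(\d+(?:\.\d+)*)__(\w+)\.sql")


def version_key(filename: str) -> tuple[int, ...]:
    """Parse V<version>__<description>.sql into a numeric tuple, so V10 sorts after V9."""
    match = NAME_PATTERN.fullmatch(filename)
    if match is None:
        raise ValueError(f"not a versioned migration: {filename}")
    return tuple(int(part) for part in match.group(1).split("."))


def migrate(conn: sqlite3.Connection, migrations: dict) -> list[str]:
    """Apply pending migrations in version order and return the ones applied."""
    conn.execute("CREATE TABLE IF NOT EXISTS schema_history (version TEXT PRIMARY KEY, script TEXT)")
    applied = {row[0] for row in conn.execute("SELECT version FROM schema_history")}
    newly_applied = []
    for filename in sorted(migrations, key=version_key):
        version = ".".join(str(part) for part in version_key(filename))
        if version in applied:
            continue  # applied migrations are never re-run; change the schema with a new one
        conn.execute(migrations[filename])  # raises on failure, so nothing is recorded
        with conn:  # commit the history row once the migration has succeeded
            conn.execute("INSERT INTO schema_history VALUES (?, ?)", (version, filename))
        newly_applied.append(filename)
    return newly_applied


conn = sqlite3.connect(":memory:")
print(migrate(conn, MIGRATIONS))  # ['V1__create_person.sql', 'V2__add_email_to_person.sql']
print(migrate(conn, MIGRATIONS))  # []
```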