Assembling the Apache Lakehouse: The Modular Architecture

Explains the modular Apache Lakehouse architecture using open-source components like Parquet, Iceberg, Polaris, and Arrow for vendor-neutral data management.

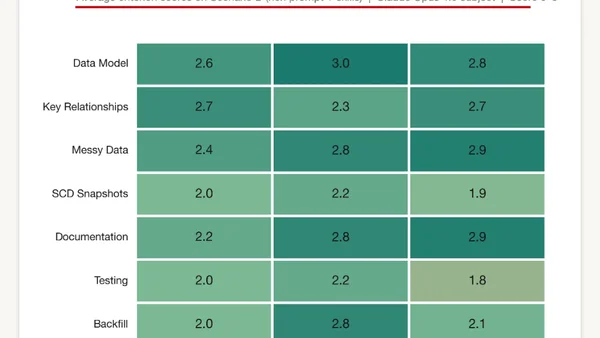

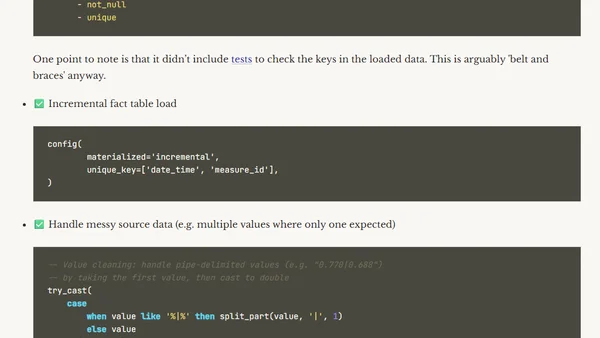

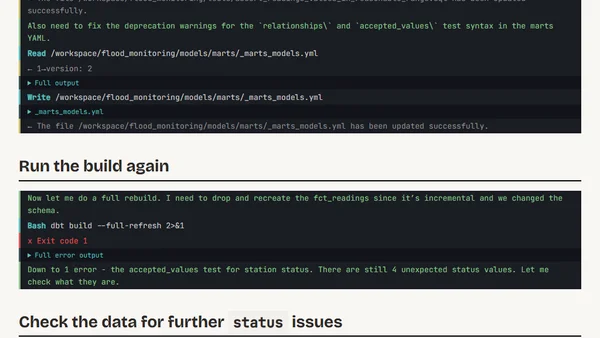

Testing Claude Code's ability to build a production-ready dbt project for a data pipeline, evaluating prompts and skills.

Explores the current capabilities and limitations of using Claude Code (AI) to build a dbt project, arguing it won't replace data engineers yet.

A technical demonstration of using Claude Code to autonomously debug and adapt dbt data models by analyzing data anomalies.

A guide to integrating Dremio's data platform with JetBrains AI Assistant for enhanced data querying, pipeline generation, and app development within JetBrains IDEs.

Monthly job board for database professionals, featuring remote and onsite data engineering, DBA, and analytics roles from March 2026.

A monthly roundup of tech links focusing on data engineering, Kafka, AI, and software development, including personal articles and industry news.

Argues that data quality must be enforced at the pipeline's ingestion point, not patched in dashboards, to ensure consistent, reliable data.

A guide to designing reliable, fault-tolerant data pipelines with architectural principles like idempotency, observability, and DAG-based workflows.

A guide to the core principles and systems thinking required for data engineering, beyond just learning specific tools.

Explains idempotent data pipelines, patterns like partition overwrite and MERGE, and how to prevent duplicate data during retries.

Explains how to safely evolve data schemas using API-like discipline to prevent breaking downstream systems like dashboards and ML pipelines.

A guide to choosing between batch and streaming data processing models based on actual freshness requirements and cost.

Explains the importance of automated testing for data pipelines, covering schema validation, data quality checks, and regression testing.

Seven critical mistakes that can derail semantic layer projects in data engineering, with practical advice on how to avoid them.

Seven common data modeling mistakes that cause reporting errors and slow analytics, with practical solutions to avoid them.

A practical, tool-agnostic checklist of essential best practices for designing, building, and maintaining reliable data engineering pipelines.

Explains the importance of pipeline observability for data health, covering metrics, logs, and lineage to detect issues beyond simple execution monitoring.

Explores the limitations of traditional pull queries in data systems and advocates for using materialized views and data duplication to improve performance.

A comprehensive guide to learning Apache Iceberg, data lakehouse architecture, and Agentic AI with curated tutorials, tools, and resources.