All About Parquet Part 05 - Compression Techniques in Parquet

Explores compression algorithms in Parquet files, comparing Snappy, Gzip, Brotli, Zstandard, and LZO for storage and performance.

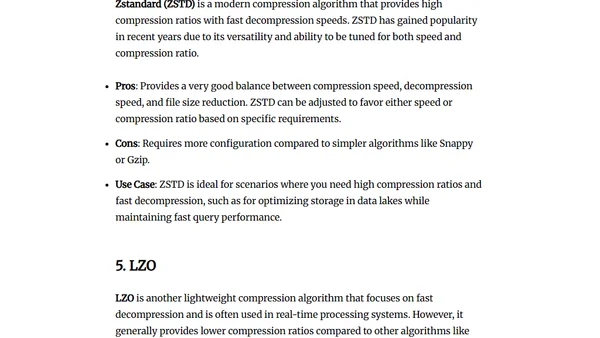

A technical guide on processing Overture Maps' global land cover dataset, focusing on extracting and analyzing Australia's data using DuckDB and QGIS.

A tutorial on using PyArrow for data analytics in Python, covering core concepts, file I/O, and analytical operations.

Exploring Japan's building footprint data from the Flateau project, which converts 3D CityGML data into 2D Parquet files for analysis.

A benchmark analysis of DuckDB's performance on a massive 1.1 billion row NYC taxi dataset, comparing it to other database technologies.

A no-code tutorial on converting XLS/CSV files to Parquet format using Dremio, including setup via Docker.

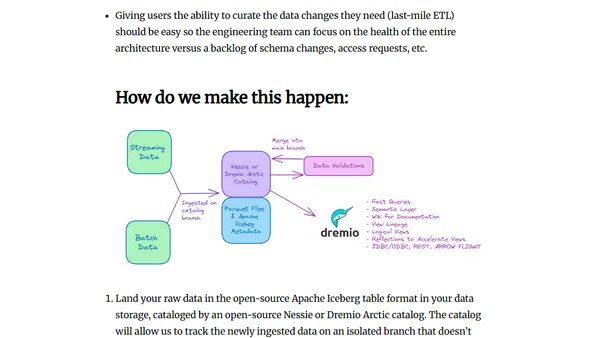

A guide to building a cost-effective, high-performance, and self-service data lakehouse architecture, addressing common pitfalls and outlining key principles.

A guide comparing popular data compression codecs (zstd, brotli, lz4, gzip, snappy) for Parquet files, explaining their trade-offs for big data.

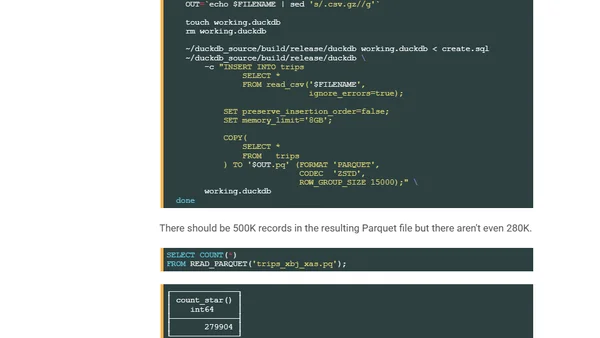

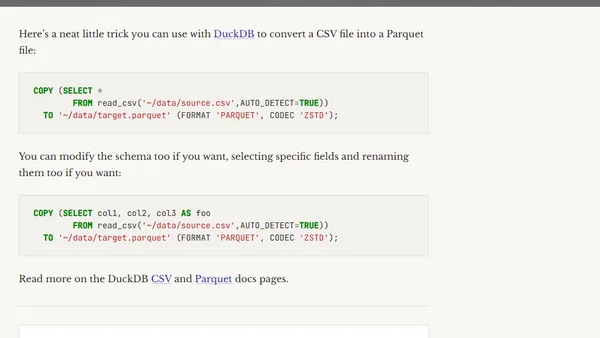

A quick guide on using DuckDB's SQL commands to efficiently convert CSV files to the Parquet format, including schema modifications.

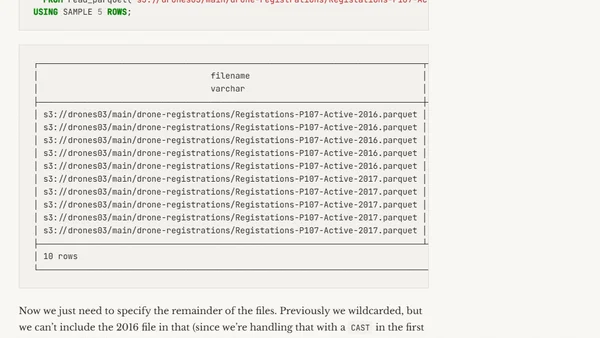

How to handle mismatched Parquet file schemas when querying multiple files in DuckDB using the UNION_BY_NAME option.

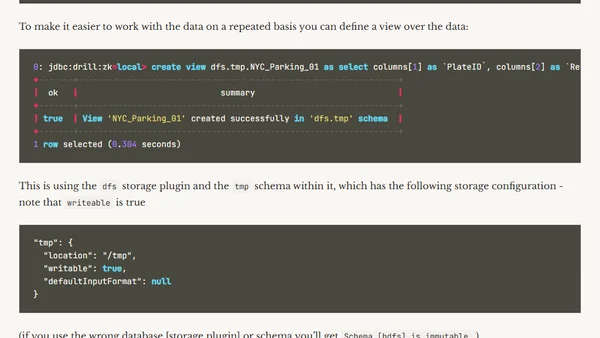

Introduction to Apache Drill, a SQL engine for querying diverse data sources like files (CSV, JSON) and databases.