Static Quantization with Hugging Face `optimum` for ~3x latency improvements

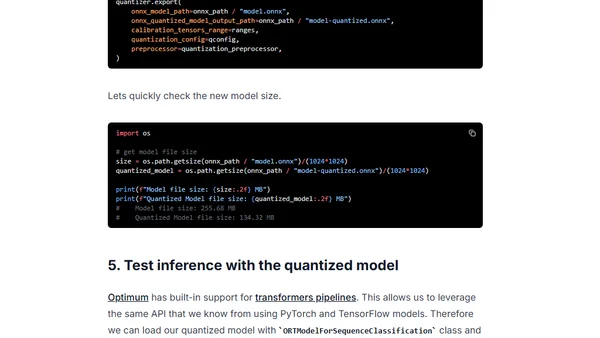

This technical tutorial demonstrates post-training static quantization of a Hugging Face Transformers model using the Optimum library and ONNX Runtime. It walks step by step through quantizing a DistilBERT model for CPU inference: converting the model to ONNX, calibrating it, quantizing it, evaluating its performance, and sharing the result on the Hugging Face Hub. The outcome is a significant latency improvement (roughly 3x, per the title) with minimal accuracy loss.
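To make the calibration and quantization steps concrete, here is a minimal pure-Python sketch of what static quantization computes for a single activation tensor. It is not the Optimum or ONNX Runtime API. It assumes min-max calibration and an asymmetric uint8 scheme, and the function names (`calibrate_minmax`, `qparams`, `quantize`, `dequantize`) are hypothetical. What makes the quantization *static* is that the scale and zero point are fixed ahead of time from calibration data rather than recomputed for each input.

```python
from typing import List, Sequence, Tuple

# Assumption: unsigned 8-bit target range (signed int8 is also common).
QMIN, QMAX = 0, 255


def calibrate_minmax(batches: Sequence[Sequence[float]]) -> Tuple[float, float]:
    """Record the global min/max activation seen across calibration batches."""
    lo, hi = float("inf"), float("-inf")
    for batch in batches:
        lo = min(lo, min(batch))
        hi = max(hi, max(batch))
    # Widen the range to include 0.0 so that zero is exactly representable.
    return min(lo, 0.0), max(hi, 0.0)


def qparams(lo: float, hi: float) -> Tuple[float, int]:
    """Affine (asymmetric) scale and zero point mapping [lo, hi] onto [QMIN, QMAX]."""
    scale = (hi - lo) / (QMAX - QMIN) if hi > lo else 1.0
    zero_point = int(round(QMIN - lo / scale))
    return scale, max(QMIN, min(QMAX, zero_point))


def quantize(xs: Sequence[float], scale: float, zp: int) -> List[int]:
    """Map floats to 8-bit integers, clamping values outside the calibrated range."""
    return [max(QMIN, min(QMAX, int(round(x / scale)) + zp)) for x in xs]


def dequantize(qs: Sequence[int], scale: float, zp: int) -> List[float]:
    """Approximately reconstruct the original floats from their 8-bit codes."""
    return [(q - zp) * scale for q in qs]


# Calibration runs once, offline, on a few representative batches.
calibration = [[-1.0, 0.5, 2.0], [0.0, 3.0, -0.5]]
lo, hi = calibrate_minmax(calibration)
scale, zp = qparams(lo, hi)

# At inference time the same fixed parameters are reused for every new input.
inputs = [-1.0, 0.0, 0.5, 3.0]
q = quantize(inputs, scale, zp)
restored = dequantize(q, scale, zp)
print(lo, hi, zp, q)
```

Because the parameters are frozen after calibration, inputs outside the calibrated range are clamped. For that reason, the calibration data should resemble the traffic the model will actually see.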
