We gotta talk about AI as a programming tool for the arts

A theater software CEO shares his journey from AI skepticism to using Claude Code to build a niche lighting app, discussing AI's impact on programming.

SimonWillison.net is the long-running blog of Simon Willison, a software engineer, open-source creator, and co-creator of the Django web framework. He writes about Python, Django, Datasette, AI tooling, prompt engineering, search, databases, APIs, data journalism, and practical software architecture. The blog includes detailed notes from experiments, conference talks, and real projects. Readers will find clear explanations of topics such as LLM workflows, SQL patterns, data publishing, scraping, deployment, caching, and modern developer tooling. Simon also publishes frequent micro-posts and TIL entries that document small discoveries and tricks from day-to-day engineering work. The tone is practical and research-oriented, making the site a valuable resource for anyone interested in serious engineering and open data.

Datasette 1.0a24 release adds file upload support, a new dev environment using uv, and plugin hook enhancements.

A developer explains how to add dynamic features like admin edit links and random tag navigation to a statically cached Django blog using localStorage and JavaScript.

A five-level model for AI-assisted programming, from basic autocomplete to fully autonomous 'dark factory' software development.

A developer uses a single AI coding agent to build a basic web browser from scratch in Rust over three days, challenging assumptions about AI-assisted development.

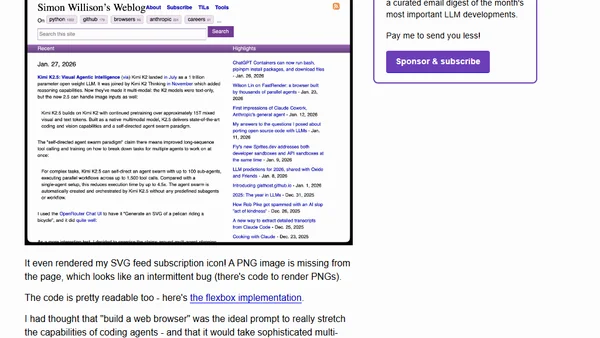

Kimi K2.5 is a new multimodal AI model with visual understanding and a self-directed agent swarm for complex task execution.

Tips for using AI coding agents to generate high-quality Python tests, focusing on leveraging existing test suites and patterns.

ChatGPT's code execution containers now support bash, multiple programming languages, package installation via pip/npm, and file downloads.

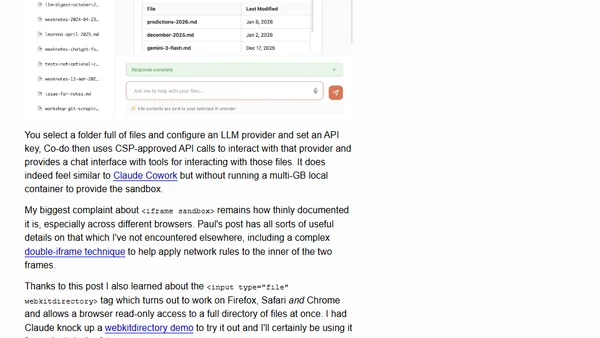

Explores using the web browser as a secure sandbox for AI coding agents, examining APIs for filesystem, network, and safe code execution.

A design lead critiques the traditional design process, advocating for rapid AI-powered prototyping to reduce risk and explore ideas faster.

Explores why non-programmers struggle to see software solutions, contrasting their mindset with the automation-focused perspective of developers.

A developer describes using ChatGPT to research game theory and implementing a token-based conflict system in a simulated town game.

Explains how exe.dev's SSH-based hosting service routes traffic to specific VMs without unique IPs, using public keys for identification.

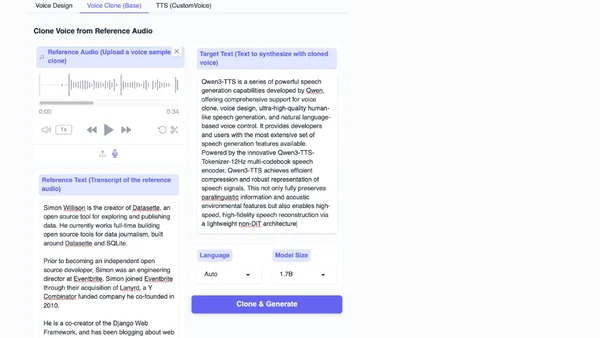

Qwen3-TTS, a family of advanced multilingual text-to-speech models, is now open source, featuring voice cloning and description-based control.

Explains how Claude Code functions as a small game engine with a React-based scene graph pipeline for terminal UI rendering.

Describes Claude Code as a small game engine whose rendering pipeline turns a React-based scene graph into ANSI terminal output within a 16ms frame budget.

Anthropic publicly released Claude AI's internal 'constitution', a 35k-token document outlining its core values and training principles.

Analysis of the electricity consumption of AI coding agents like Claude Code, comparing it to household appliances based on token usage.

A university professor describes an open-book exam where students could use chatbots, analyzing the low adoption rate and student motivations.

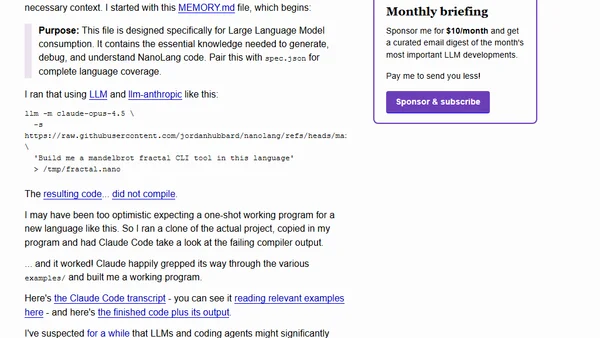

Explores NanoLang, a new programming language designed for LLMs, and tests AI's ability to generate working code in it.