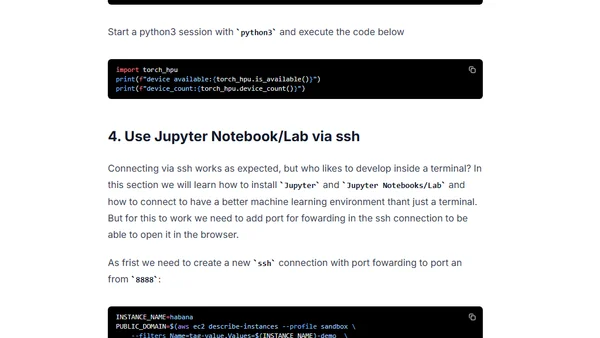

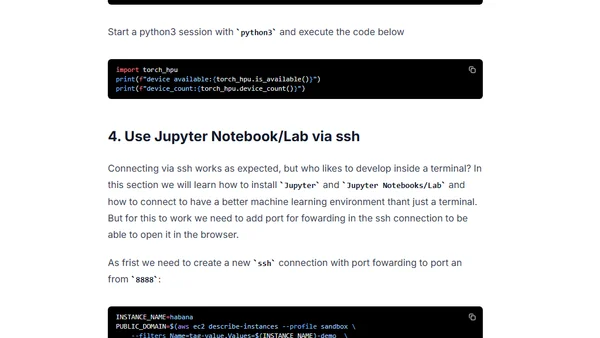

Setup Deep Learning environment for Hugging Face Transformers with Habana Gaudi on AWS

Guide to setting up a deep learning environment on AWS using Habana Gaudi accelerators and Hugging Face libraries for transformer models.

Philipp Schmid is a Staff Engineer at Google DeepMind, building AI Developer Experience and DevRel initiatives. He specializes in LLMs, RLHF, and making advanced AI accessible to developers worldwide.

191 articles from this blog

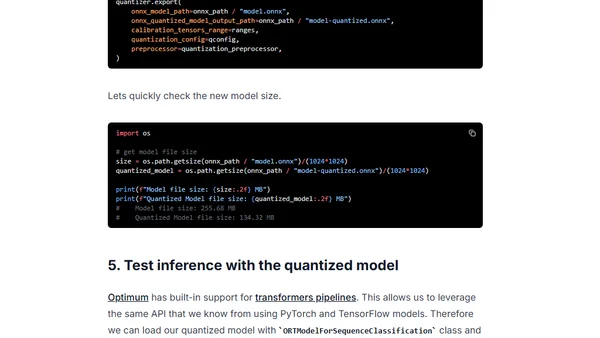

Learn how to use Hugging Face Optimum and ONNX Runtime to apply static quantization to a DistilBERT model, achieving ~3x latency improvements.

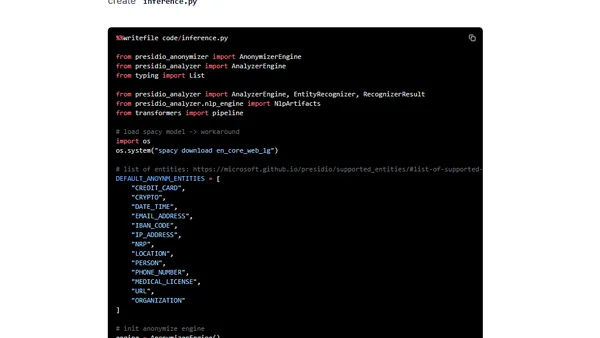

A technical guide on using Hugging Face Transformers and Amazon SageMaker to detect and anonymize Personally Identifiable Information (PII) in text.

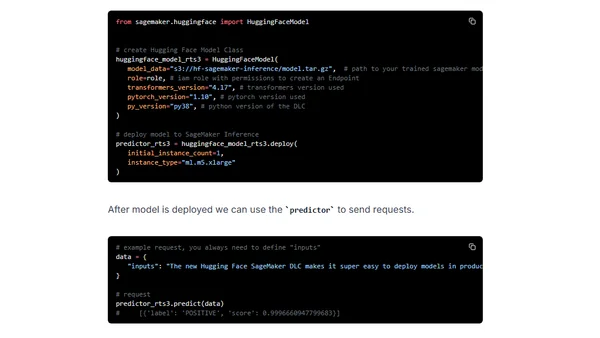

Compares Amazon SageMaker's four inference options for deploying Hugging Face Transformers models, covering latency, use cases, and pricing.

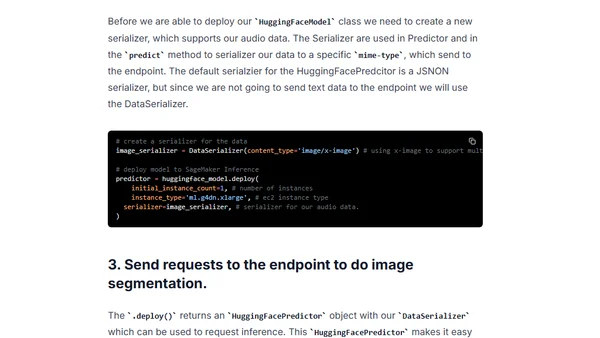

A technical guide on using Hugging Face's SegFormer model with Amazon SageMaker for semantic image segmentation tasks.

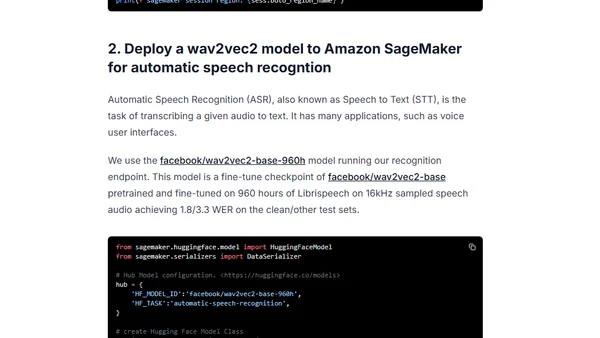

A tutorial on deploying Hugging Face's wav2vec2 model on Amazon SageMaker for automatic speech recognition using the updated SageMaker SDK.

A guide to deploying Hugging Face's DistilBERT model for serverless inference using Amazon SageMaker, including setup and deployment steps.

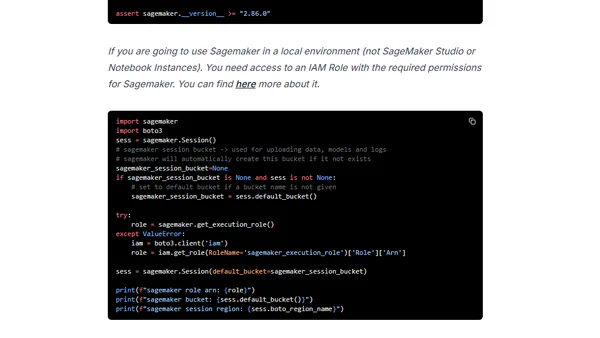

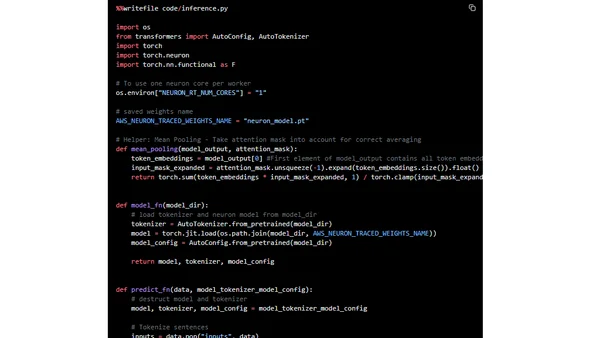

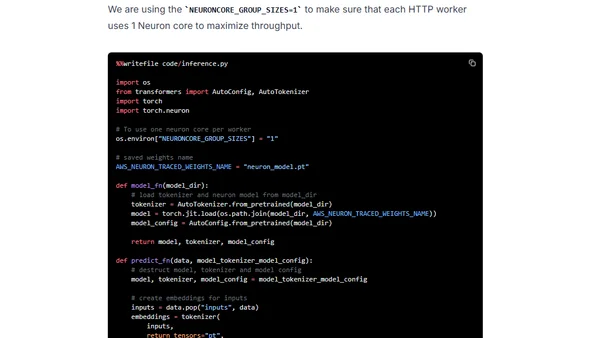

A tutorial on accelerating sentence embeddings using Hugging Face Transformers and AWS Inferentia chips for high-performance semantic search.

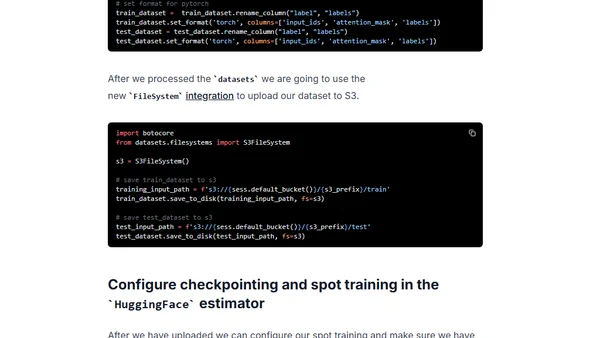

A technical guide on using AWS Spot Instances with Hugging Face Transformers on Amazon SageMaker to reduce machine learning training costs by up to 90%.

A tutorial on accelerating BERT model inference using Hugging Face Transformers and AWS Inferentia chips for cost-effective, high-performance deployment.

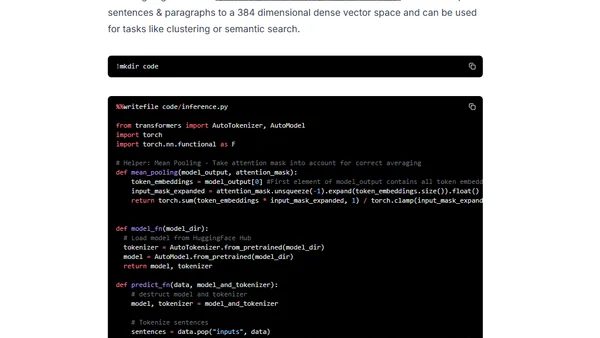

Guide to deploying a Sentence Transformers model on Amazon SageMaker for generating document embeddings using Hugging Face's Inference Toolkit.

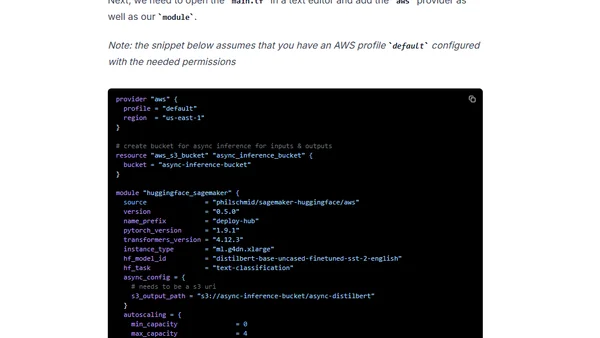

A guide to deploying autoscaling Hugging Face Transformers (like BERT) on Amazon SageMaker using a Terraform module for real-time and asynchronous inference.

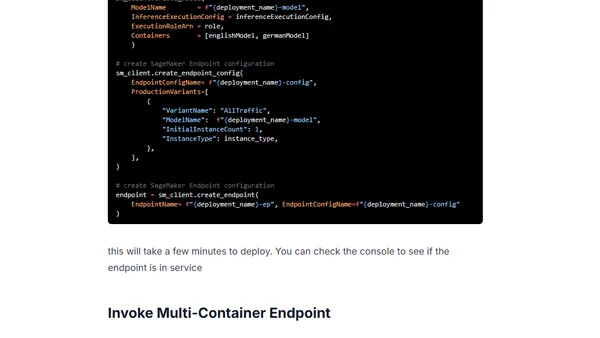

Guide to deploying multiple Hugging Face Transformer models as a cost-optimized Multi-Container Endpoint using Amazon SageMaker.

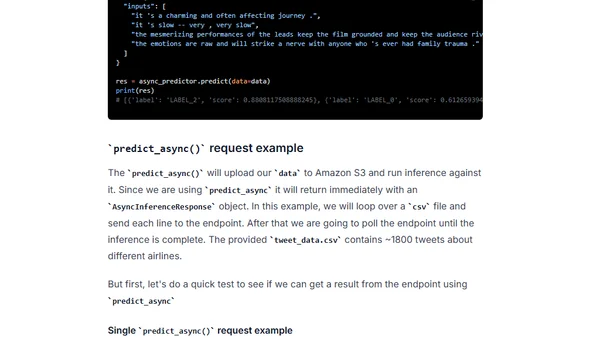

Guide to deploying Hugging Face Transformers models for asynchronous inference using Amazon SageMaker, including setup and configuration.

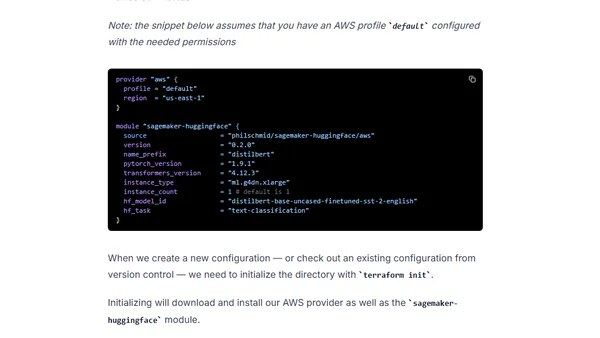

A technical guide on deploying a DistilBERT model to production using Hugging Face Transformers, Amazon SageMaker, and Infrastructure as Code with Terraform.

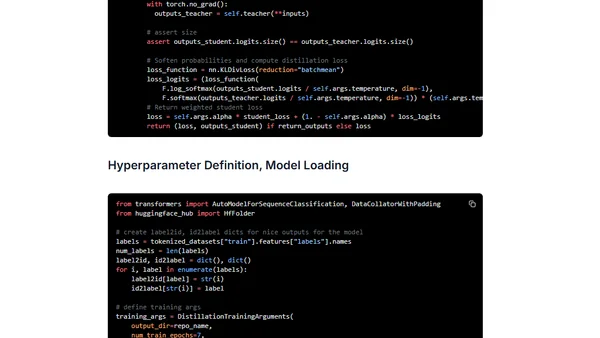

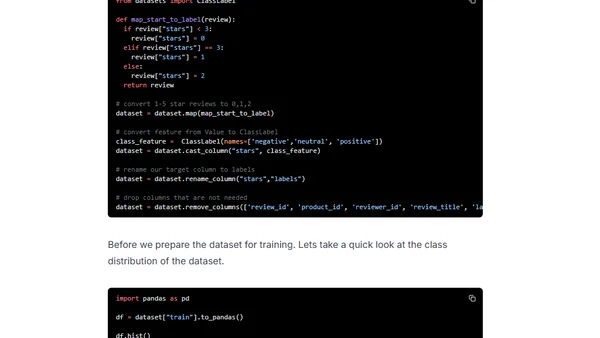

A tutorial on using task-specific knowledge distillation to compress a BERT model for text classification with Transformers and Amazon SageMaker.

A guide to accelerating multilingual BERT fine-tuning using Hugging Face Transformers with distributed training on Amazon SageMaker.

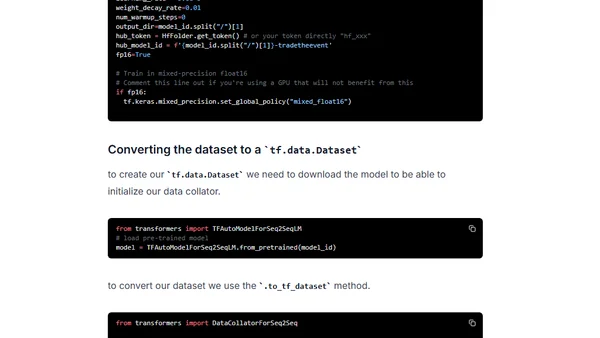

A tutorial on fine-tuning a Hugging Face Transformer model for financial text summarization using Keras and Amazon SageMaker.

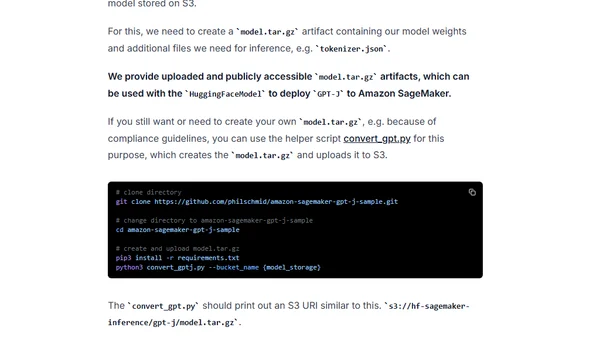

A guide to deploying the GPT-J 6B language model for production inference using Hugging Face Transformers and Amazon SageMaker.

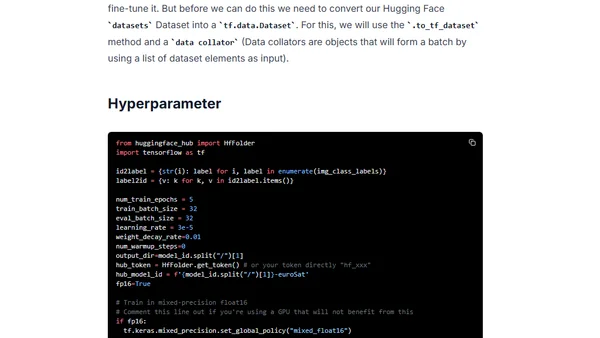

A tutorial on fine-tuning a Vision Transformer (ViT) model for satellite image classification using Hugging Face Transformers and Keras.