Distributed training on multilingual BERT with Hugging Face Transformers and Amazon SageMaker

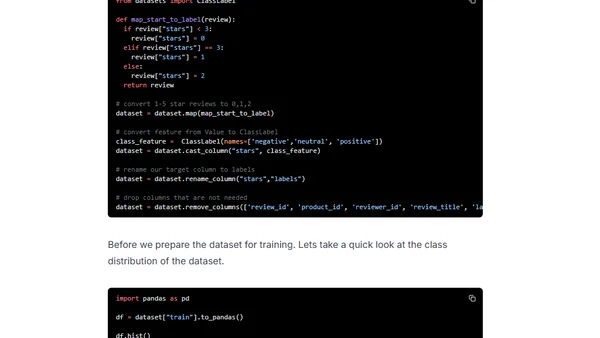

This technical tutorial demonstrates how to use distributed training to fine-tune a multilingual BERT model for text classification. It uses the Hugging Face Transformers and Datasets libraries with PyTorch on Amazon SageMaker, relying on SageMaker's data parallelism to scale training across GPUs and shorten training time on large datasets.
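As a rough illustration of what enabling SageMaker data parallelism can look like, here is a minimal sketch, not the tutorial's actual code. The entry point `train.py`, the `./scripts` directory, the hyperparameter names, the batch size, and the choice of `bert-base-multilingual-cased` are all assumptions made for this example. The framework versions are left for the caller to supply, since the summary does not state them.

```python
from typing import Any, Dict

# GPU counts for instance types commonly used with SageMaker data parallelism.
GPUS_PER_INSTANCE = {
    "ml.p3.16xlarge": 8,
    "ml.p3dn.24xlarge": 8,
    "ml.p4d.24xlarge": 8,
}


def build_estimator_kwargs(
    role: str,
    instance_type: str = "ml.p3.16xlarge",
    instance_count: int = 2,
    per_device_batch_size: int = 16,
) -> Dict[str, Any]:
    """Assemble keyword arguments for a Hugging Face estimator (sketch)."""
    return {
        "entry_point": "train.py",    # hypothetical training script
        "source_dir": "./scripts",    # hypothetical directory
        "role": role,
        "instance_type": instance_type,
        "instance_count": instance_count,
        # Hypothetical hyperparameter names; train.py would have to parse them.
        "hyperparameters": {
            "model_name": "bert-base-multilingual-cased",  # assumed checkpoint
            "epochs": 3,
            "train_batch_size": per_device_batch_size,
        },
        # This switch turns on SageMaker's data parallel library.
        "distribution": {"smdistributed": {"dataparallel": {"enabled": True}}},
    }


def global_batch_size(kwargs: Dict[str, Any]) -> int:
    """Effective batch size per step: per-GPU batch x GPUs x instances."""
    gpus = GPUS_PER_INSTANCE[kwargs["instance_type"]]
    per_device = kwargs["hyperparameters"]["train_batch_size"]
    return per_device * gpus * kwargs["instance_count"]


def launch(kwargs: Dict[str, Any], versions: Dict[str, str], inputs: Dict[str, str]):
    """Start the training job. Requires the sagemaker SDK and AWS credentials.

    `versions` must hold transformers_version, pytorch_version and py_version;
    `inputs` maps channel names to S3 URIs. Both are supplied by the caller.
    """
    from sagemaker.huggingface import HuggingFace  # imported lazily

    estimator = HuggingFace(**kwargs, **versions)
    estimator.fit(inputs)
    return estimator


if __name__ == "__main__":
    # Placeholder role ARN for illustration only.
    kw = build_estimator_kwargs("arn:aws:iam::111122223333:role/example")
    print(kw["distribution"])
    print("global batch size:", global_batch_size(kw))
```

With data parallelism each GPU processes its own mini-batch, so the effective batch size grows with the number of GPUs and instances. The learning rate often needs to be adjusted to match.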
