Accelerate BERT inference with DeepSpeed-Inference on GPUs

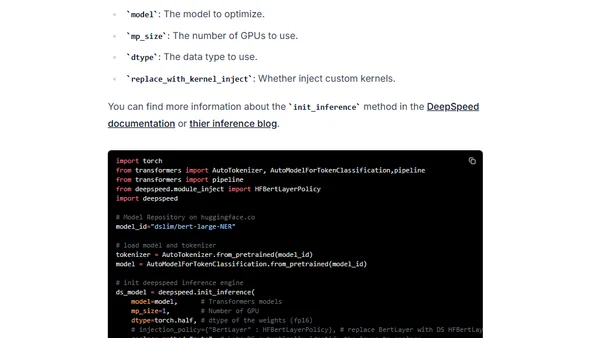

This technical tutorial demonstrates how to accelerate inference for Hugging Face Transformers models (BERT, RoBERTa) on GPUs using DeepSpeed-Inference. It covers setting up the environment, applying the optimization techniques, and evaluating the performance gains, and it shows how to reduce latency for a BERT-large model from 30ms to 10ms.
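
The optimize-then-measure workflow described above can be sketched roughly as follows. This is a minimal illustration, not the tutorial's own code: `optimize_with_deepspeed`, `measure_latency`, and `speedup` are hypothetical helper names, and fp16 weights with kernel injection are assumed settings. The DeepSpeed call needs a CUDA GPU with `deepspeed` and `torch` installed, so it is imported lazily; the timing helper uses only the standard library.

```python
import statistics
import time
from typing import Callable, Dict


def optimize_with_deepspeed(model):
    """Wrap a Hugging Face model with DeepSpeed-Inference (requires a CUDA GPU)."""
    # Lazy imports so the timing helpers below work without deepspeed installed.
    import deepspeed
    import torch

    return deepspeed.init_inference(
        model,
        dtype=torch.half,                 # assumption: run with fp16 weights
        replace_with_kernel_inject=True,  # swap supported layers for fused kernels
    )


def measure_latency(fn: Callable[[], object], warmup: int = 10, runs: int = 100) -> Dict[str, float]:
    """Time repeated calls to fn and return mean and p95 latency in milliseconds.

    For GPU workloads, fn should block until the result is ready (for example by
    moving the output to the CPU), otherwise asynchronous kernels are under-timed.
    """
    for _ in range(warmup):  # warm-up calls are not recorded
        fn()
    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000.0)
    samples_ms.sort()
    return {
        "mean_ms": statistics.mean(samples_ms),
        "p95_ms": samples_ms[int(0.95 * (len(samples_ms) - 1))],
    }


def speedup(baseline_ms: float, optimized_ms: float) -> float:
    """Ratio of baseline latency to optimized latency."""
    return baseline_ms / optimized_ms
```

With the figures quoted above, `speedup(30.0, 10.0)` gives a 3x improvement. In practice one would call `measure_latency` once on the plain model and once on the DeepSpeed-wrapped model, using the same input for both.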
