Debating the Merits of LLMs

An analysis of the ethical debate around LLMs, contrasting their use in creative fields with their potential for scientific advancement.

Explores the critical challenge of bias in health AI data, why unbiased data is impossible, and the ethical implications for medical algorithms.

Argues for a balanced approach to AI, using it for discovery but doing important learning and thinking tasks manually to preserve meaning and growth.

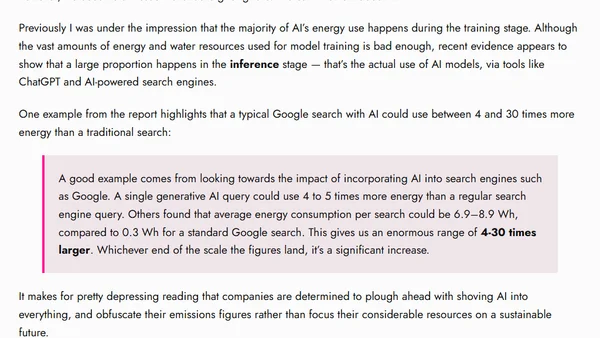

A report reveals the significant environmental impact of AI, especially at the inference stage: AI-powered search, for example, uses 4 to 30 times more energy than traditional methods.

Analysis of Mozilla's public AI paper, highlighting the benefits of small, open-source language models for efficiency, privacy, and global access.

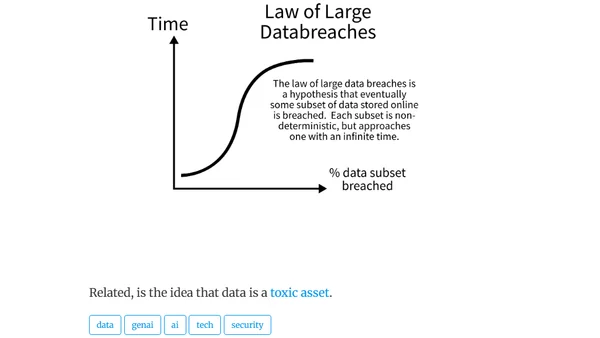

Explores the 'law of large data breaches,' the hypothesis that every subset of online data is eventually breached, relating it to probability and to the idea of data as a toxic asset.

Explores the need for a modern "Digital Rights of Humans" declaration to protect privacy, data ownership, and freedom from algorithmic harm in the AI era.

Argues for evolving the robots.txt standard with AI-specific rules, backed by regulations to enforce them, citing Perplexity AI's violations.

A personal manifesto advocating for a more human-centric, intentional, and ethical web in response to the rise of AI-generated content.

Argues that the term 'Open Source' is misleading for LLMs and proposes the new term 'PALE LLMs' (Publicly Available, Locally Executable).

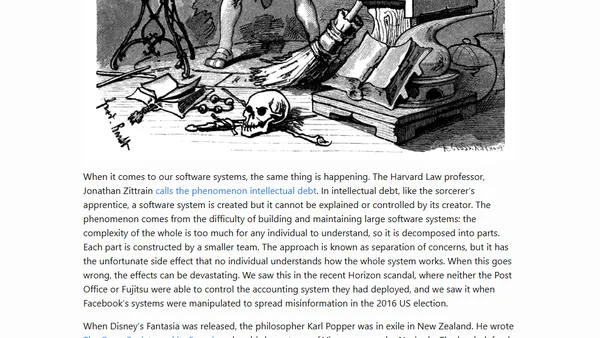

Explores the concept of 'intellectual debt' in AI and software systems, comparing it to The Sorcerer's Apprentice and arguing for open society principles as a solution.

Analyzes the role of IBM's punch card technology in the Holocaust to draw parallels with modern AI risks and corporate ethics in technology.

An opinion piece arguing against using AI-generated images, highlighting ethical concerns and the negative impact on professional illustrators' livelihoods.

A developer argues that AI should focus on automating tedious tasks to free up human energy for creative and meaningful work.

An analysis of GitHub Copilot's ethical and legal implications regarding open source licensing, arguing it facilitates the laundering of free software into proprietary code.

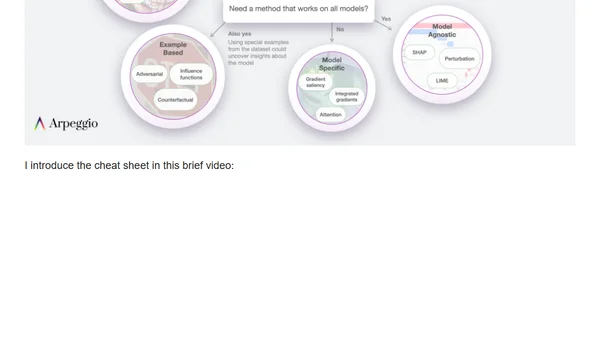

A high-level guide to Explainable AI (XAI): the tools and methods for understanding AI/ML models and their predictions.

Explores practical processes for building trust and ensuring ethics in AI development, focusing on transparency, bias, and security.

Explores practical aspects of building trust in AI systems, focusing on trust in the development process, results, and the company itself.

Explores the interconnected principles of trust, ethics, transparency, and accountability in the development and deployment of Artificial Intelligence systems.

Analyzes the fallout from Timnit Gebru's firing from Google and debates appropriate community responses in the AI research field.