AI Will Never Be Ethical or Safe

Explores why AI can never be fully ethical or safe due to its fundamental inability to know context and intent.

Explores legal liability risks when using AI agents to write code, questioning who is accountable for bugs that cause harm or financial loss.

Critique of AI's 'frictionless' promise, arguing human expertise and collaboration remain essential despite automation hype.

A guide to identifying deceptive 'AI snake-oil salesmen' and their red flags, contrasting them with legitimate AI practitioners.

An expert discusses the overhyped risks and data limitations of applying AI in life sciences, using examples like AlphaFold.

Explores how LLMs and AI challenge traditional definitions of privacy, analyzing the ethical implications of machine vs. human data observation.

Discussion on the "We love open source" podcast about the potential threats that AI poses to the open source and free software movements.

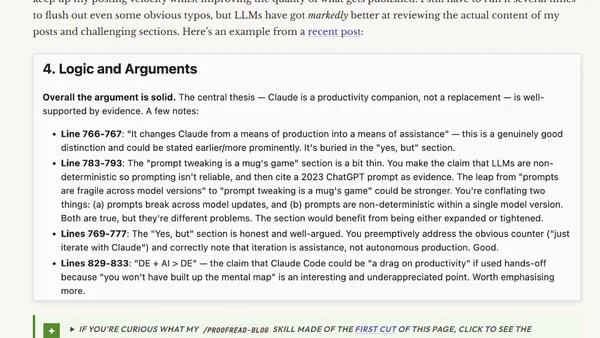

A developer explains how they use AI tools like Claude for web development, proofreading, and coding, but not for writing blog content.

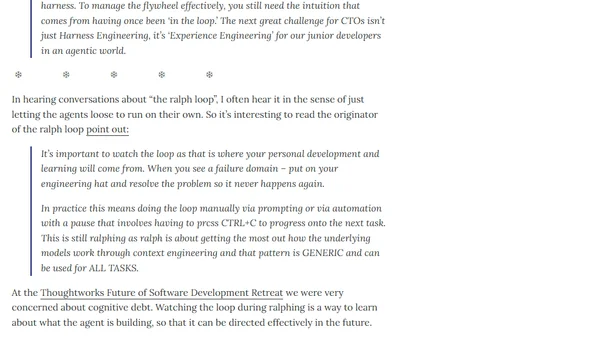

A tech digest discussing AI's impact on software development, data privacy fines, and the 'Apprentice Gap' in an agentic world.

A quote from Joseph Weizenbaum on how simple computer programs can induce delusional thinking in people, shared on a tech blog.

Analysis of Anthropic's Pentagon contract and its branding as a 'moral' AI provider in a commodified AI market.

Argues for rigorous scientific studies on the impact and risks of using LLMs in software development, highlighting the current lack of impartial research.

Analysis of OpenAI's DoD contract language regarding the legal interpretation of 'lawful purposes' for autonomous weapons systems.

Analyzes the hidden costs and skill erosion of using AI for coding, emphasizing the need for human oversight.

A developer's policy on using AI for writing blog posts, code docs, and proofreading, distinguishing human opinion from AI assistance.

A critique of relying on others' opinions about coding assistants, urging developers to trust their own skills and curiosity.

Anthropic's mission as a public benefit corporation, detailing its commitment to responsible AI development for humanity's long-term benefit.