Accelerating Large Language Models with Mixed-Precision Techniques

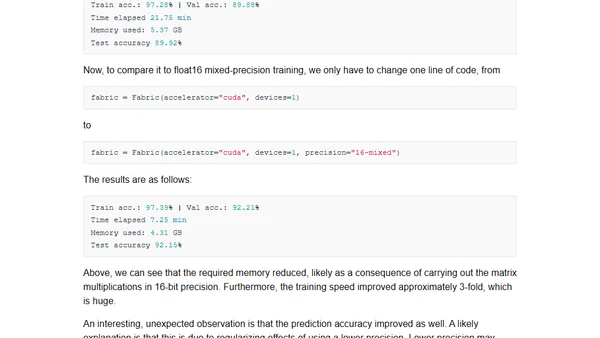

This technical article details mixed-precision training for large language models (LLMs). It explains how using lower-precision formats such as 16-bit floating point can speed up training 2-3x and reduce the memory footprint without sacrificing accuracy. It covers the fundamentals of floating-point representation, compares 32-bit and 64-bit precision, and discusses the practical benefits for deep learning on modern GPUs.
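The trade-off between precision and memory can be made concrete with a small sketch. It uses only Python's standard `struct` module, whose `'e'`, `'f'` and `'d'` format codes store IEEE 754 half (16-bit), single (32-bit) and double (64-bit) precision values. For each format, the sketch shows how many bytes one value takes, how much rounding error appears when 0.1 is stored, and how the stored bits split into sign, exponent and mantissa fields. The 7-billion-parameter model is a hypothetical example, and the memory estimate counts weights only. It ignores gradients, optimizer state and activations, which add substantially more in real training.

```python
import struct

# Python struct format codes for IEEE 754 formats:
# 'e' = half precision (16-bit), 'f' = single (32-bit), 'd' = double (64-bit)
FORMATS = {"float16": "e", "float32": "f", "float64": "d"}

# (exponent bits, mantissa bits) per format; the remaining bit is the sign
LAYOUT = {"float16": (5, 10), "float32": (8, 23), "float64": (11, 52)}


def round_trip(x: float, name: str) -> float:
    """Store x in the given format and read it back, exposing rounding error."""
    fmt = FORMATS[name]
    return struct.unpack("<" + fmt, struct.pack("<" + fmt, x))[0]


def bit_fields(x: float, name: str) -> tuple[str, str, str]:
    """Split the stored bit pattern of x into (sign, exponent, mantissa)."""
    fmt = FORMATS[name]
    raw = struct.pack(">" + fmt, x)  # big-endian, so the sign bit comes first
    bits = "".join(f"{byte:08b}" for byte in raw)
    exp_bits, _ = LAYOUT[name]
    return bits[0], bits[1:1 + exp_bits], bits[1 + exp_bits:]


if __name__ == "__main__":
    n_params = 7_000_000_000  # hypothetical 7B-parameter model (weights only)
    for name, fmt in FORMATS.items():
        size = struct.calcsize(fmt)  # bytes needed to store one value
        print(f"{name}: {size} bytes/value, "
              f"0.1 stored as {round_trip(0.1, name)!r}, "
              f"weights ~ {n_params * size / 1e9:.0f} GB")
    print("float16 fields of 0.1:", bit_fields(0.1, "float16"))
```

Halving the bytes per value halves the weight memory and the amount of data moved per step. That reduction is part of why 16-bit formats train faster on modern GPUs. The cost is visible in the round-trip of 0.1: float16 keeps only 10 mantissa bits, so its stored value is noticeably further from 0.1 than the float32 one.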
