Accelerate Stable Diffusion inference with DeepSpeed-Inference on GPUs

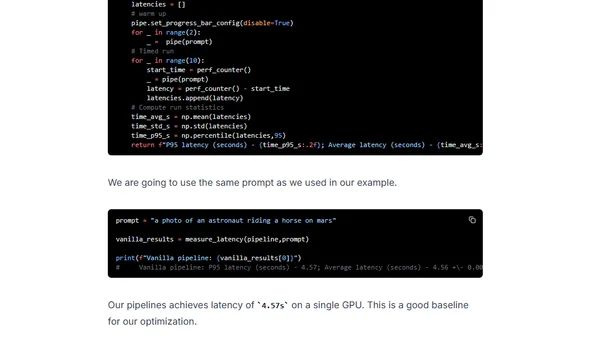

This technical tutorial demonstrates how to accelerate Stable Diffusion text-to-image generation on a single GPU using DeepSpeed-Inference. It covers setting up the environment, loading the model, applying DeepSpeed's InferenceEngine, and evaluating the performance gains, specifically on Ampere-generation NVIDIA GPUs such as the A10G.
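The performance-evaluation step can be illustrated with a small timing helper. This is a minimal sketch, not the tutorial's own benchmark code: the names `measure_latency` and `speedup`, the warmup and run counts, and the reported statistics are all assumptions chosen for illustration. It times any zero-argument callable, so it works the same way for a baseline pipeline and an optimized one.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(
    fn: Callable[[], object], warmup: int = 2, runs: int = 10
) -> Dict[str, float]:
    """Time a zero-argument callable (hypothetical helper, for illustration).

    On a GPU, work is launched asynchronously, so `fn` should block until the
    result is ready (for example by synchronizing the device before returning);
    otherwise the timings only capture launch overhead.
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")

    # Warmup calls are executed but not timed, so one-off setup costs
    # do not skew the measurement.
    for _ in range(warmup):
        fn()

    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)

    ordered = sorted(timings)
    return {
        "mean_s": statistics.mean(timings),
        "stdev_s": statistics.stdev(timings) if runs > 1 else 0.0,
        # Nearest-rank style estimate of the 95th percentile.
        "p95_s": ordered[int(0.95 * (len(ordered) - 1))],
    }


def speedup(baseline: Dict[str, float], optimized: Dict[str, float]) -> float:
    """Ratio of mean latencies; values above 1.0 mean the optimized run is faster."""
    return baseline["mean_s"] / optimized["mean_s"]
```

In practice the callable would wrap a single image generation, once before and once after the model is passed through DeepSpeed-Inference, and `speedup` would compare the two result dictionaries. Keeping the prompt, step count, and image size identical across both runs is what makes the comparison meaningful.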
