Intro to Apache Iceberg with Apache Polaris and Apache Spark

A technical guide on using Apache Iceberg with Apache Spark and Polaris for building and managing a data lakehouse, covering setup, operations, and optimization.

Overview of key proposals in Apache Iceberg v4, focusing on performance, metadata efficiency, and portability for modern data workloads.

A monthly roundup of 78 curated links on data engineering, architecture, AI, and tech trends, with top picks highlighted.

A monthly roundup of curated links and articles focused on data engineering, Apache Kafka, and data platform technologies.

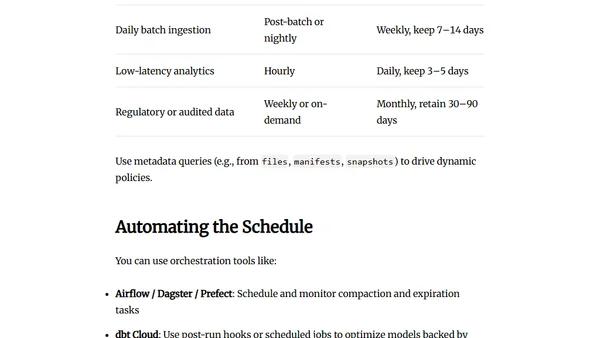

A guide to scheduling compaction and snapshot expiration in Apache Iceberg tables based on workload patterns and infrastructure constraints.

A monthly roundup of data engineering links covering Apache Iceberg, Kafka, Debezium, Spark, and lakehouse architecture.

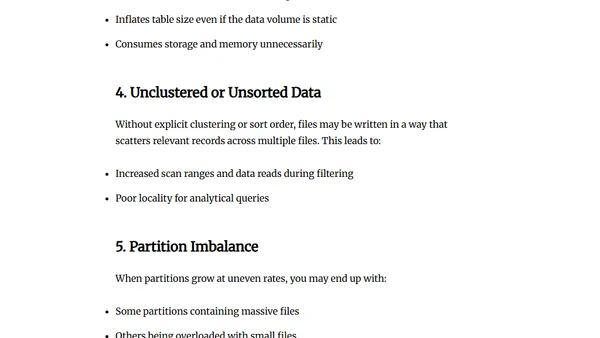

Explains how Apache Iceberg tables degrade without optimization, covering small files, fragmented manifests, and performance impacts.

Explains the importance of table maintenance in Apache Iceberg for data lakehouses, covering metadata and file management.

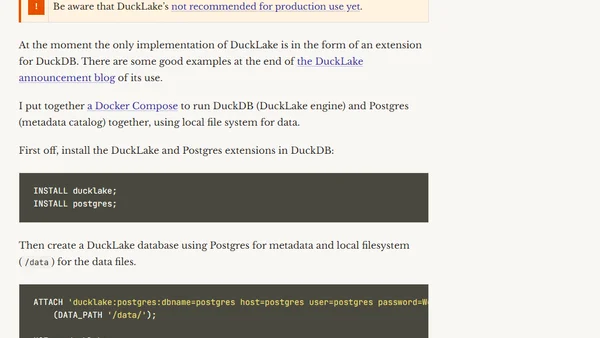

An analysis of DuckLake, a new open table format and catalog specification for data engineering, comparing it to existing solutions like Iceberg and Delta Lake.

A monthly roundup of curated links and articles covering data engineering, Kafka, stream processing, and AI, with top picks highlighted.

Explains the importance of data storage formats and compression for performance and cost in large-scale data engineering systems.

Explains the data lakehouse architecture, a unified approach combining data lake scalability with warehouse management features like ACID transactions.

Explores the modern data stack, cloud platforms, and principles for building flexible, cloud-native data engineering architectures.

Explores how DevOps principles like CI/CD, infrastructure as code, and monitoring are applied to data engineering for reliable, scalable data pipelines.

Explores core principles of scalable data engineering, including parallelism, minimizing data movement, and designing adaptable pipelines for growing data volumes.

Explores workflow orchestration in data engineering, covering DAGs, tools, and best practices for managing complex data pipelines.

Explains core data engineering concepts, comparing ETL and ELT data pipeline strategies and their use cases.

Explains streaming data fundamentals, how streaming systems work, their use cases, and challenges compared to batch processing.

Explains batch processing fundamentals for data engineering, covering concepts, tools, and its ongoing relevance in data workflows.

Explains core data engineering concepts: metadata, data lineage, and governance, and their importance for scalable, compliant data systems.