Keep the Robots Out of the Gym

A developer argues for using AI as a 'tutor' for critical thinking tasks, not just a tool to do the work, to maintain and improve core cognitive skills.

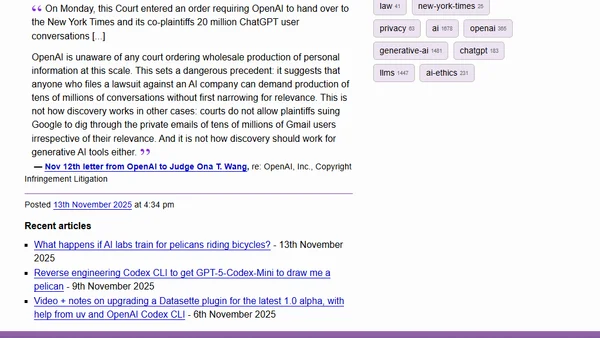

OpenAI objects to court order demanding 20M ChatGPT user conversations, citing dangerous precedent for AI discovery.

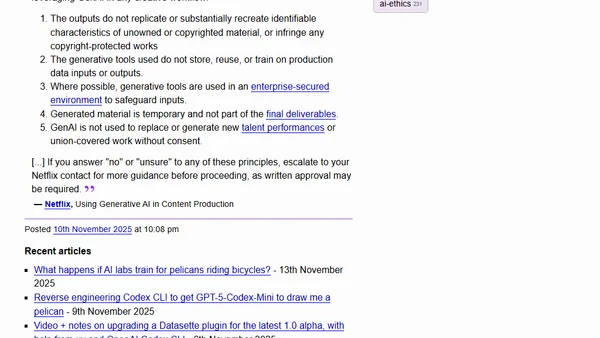

Netflix's guidelines for using generative AI in content production, focusing on copyright, data security, and talent rights.

A weekly collection of articles on software architecture, AI challenges, API testing, and team decision-making frameworks.

A developer reflects on the shift from classic programming debates to pervasive AI discussions, exploring its practical use, ethical concerns, and impact on the developer community.

Critiques the anti-AI movement's purely negative stance, arguing that it undermines the movement's credibility, and suggests more constructive criticism.

Discusses the trend of websites walling off content from AI bots, arguing it undermines open internet principles and may concentrate power.

A critical analysis of AI executives' public statements about AI's impact on jobs, questioning their detachment and the hype surrounding job displacement.

Explores the limitations of using large language models as substitutes for human opinion polling, highlighting issues of representation and demographic weighting.

Argues that we unfairly criticize AI for being non-deterministic, inconsistent, or error-prone, while accepting the same flaws in human reasoning and output.

The author discusses their blog being banned from Lobste.rs for using AI agents to assist in writing, which sparked a debate on AI's role in content creation.

Explores how AI-generated content challenges traditional work review heuristics and the need for new evaluation methods.

A developer's personal crisis about the impact of Generative AI on software engineering careers, ethics, and the future of the industry.

Analyzes the Builder.ai controversy, debunking claims that the company faked AI with human engineers and exploring the technical challenges of such a deception.

A software engineer critiques the 'democratization' of AI in development, arguing it oversimplifies and risks creating fragile software without CS fundamentals.

An article discussing the risks of over-relying on AI for creative work and how it can damage professional credibility and trust.

Explores the ethics of LLM training data and proposes a technical method to poison AI crawlers using nofollow links.

![The day piracy changed [blog]](https://alldevblogs.blob.core.windows.net/thumbs/article-5db2ab17df73-full-25f3e6da.webp)

The author argues that traditional piracy is dead, redefined by corporations like Meta using scraped, pirated content to train AI models without consequence.

Explores ethical boundaries and risks of AI, advising where human judgment should prevail over automation.

Data professionals discuss AI anxiety, job security, and the future of tech careers at Data Day Texas 2025.