Interfaces for Explaining Transformer Language Models

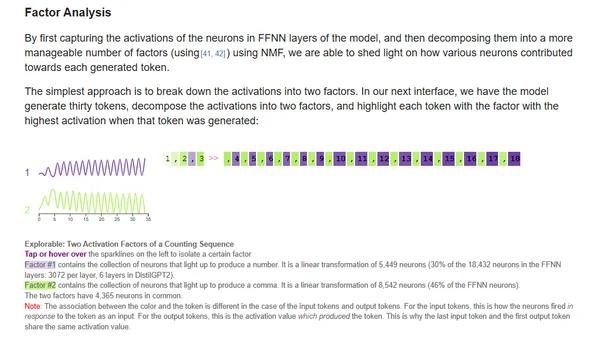

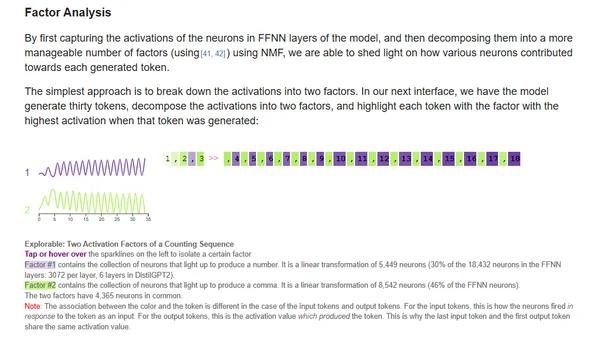

Explores interactive methods for interpreting transformer language models, focusing on input saliency and neuron activation analysis.

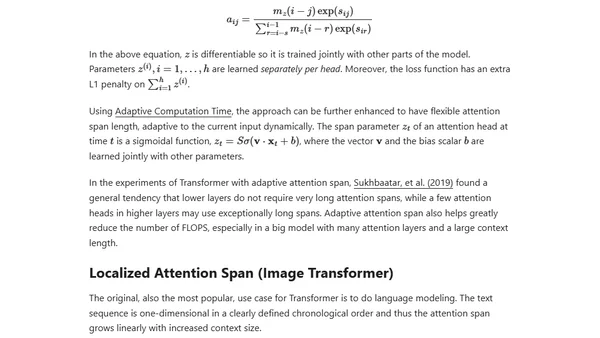

An updated overview of the Transformer model family, covering improvements for longer attention spans, efficiency, and new architectures since 2020.

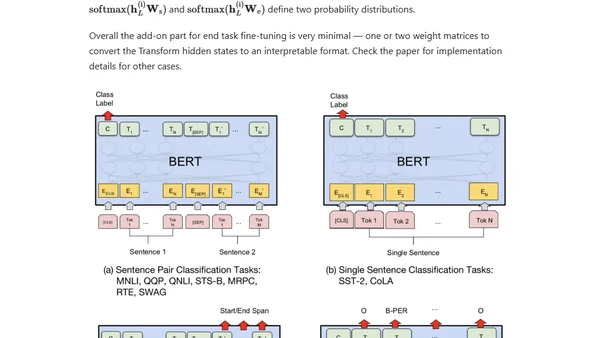

A technical overview of the evolution of large-scale pre-trained language models like BERT, GPT, and T5, focusing on contextual embeddings and transfer learning in NLP.

Explains the attention mechanism in deep learning, its motivation from human visual attention, and its role in improving seq2seq models and in attention-based architectures such as the Transformer.

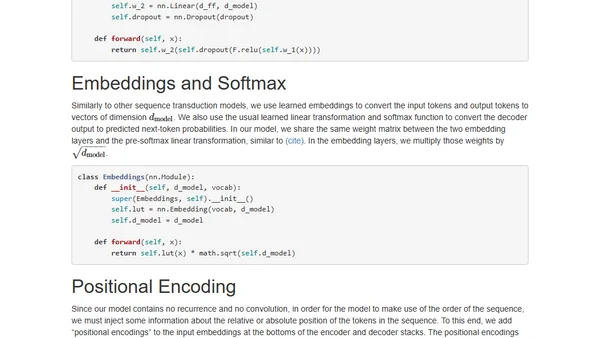

An annotated, line-by-line PyTorch implementation of the Transformer architecture from 'Attention Is All You Need'.