An unexpected detour into partially symbolic, sparsity-exploiting autodiff; or Lord won’t you buy me a Laplace approximation

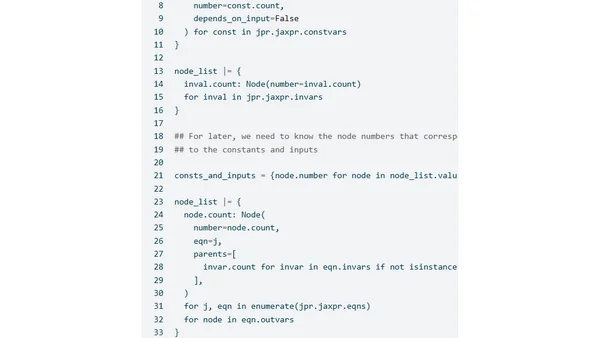

This article is a deep dive into implementing Laplace approximations, which approximate a distribution with a Gaussian centered at its mode, using the JAX library. It first covers the mathematical theory, then turns to the practical implementation challenges: sparse automatic differentiation and manipulating JAX's intermediate representation (jaxpr) to optimize gradient computation. The running example is a logistic regression, and the goal is performance close to that of a hand-coded gradient.
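To make the summary concrete, here is a minimal sketch of a Laplace approximation for a logistic regression posterior in JAX. It is not the article's implementation: it uses dense `jax.grad` and `jax.hessian` with a plain Newton iteration, so it exploits no sparsity and does not touch the jaxpr. The function names `log_posterior` and `laplace_approximation`, the standard normal prior (`prior_prec=1.0`), and the simulated data are all assumptions made for illustration.

```python
import jax
import jax.numpy as jnp

# Double precision keeps the Newton iterations well behaved.
jax.config.update("jax_enable_x64", True)


def log_posterior(beta, X, y, prior_prec=1.0):
    """Unnormalised log posterior: Bernoulli-logit likelihood plus N(0, 1/prior_prec) prior."""
    eta = X @ beta
    # log p(y | eta) = sum(y * eta - log(1 + exp(eta)))
    loglik = jnp.sum(y * eta - jnp.logaddexp(0.0, eta))
    logprior = -0.5 * prior_prec * jnp.sum(beta**2)
    return loglik + logprior


def laplace_approximation(X, y, n_steps=25):
    """Return the posterior mode and the Gaussian covariance (-Hessian)^{-1} at the mode."""
    grad_fn = jax.grad(log_posterior)
    hess_fn = jax.hessian(log_posterior)  # dense Hessian; no sparsity is exploited here
    beta = jnp.zeros(X.shape[1])
    for _ in range(n_steps):
        # Newton step: beta <- beta - H^{-1} g
        g = grad_fn(beta, X, y)
        H = hess_fn(beta, X, y)
        beta = beta - jnp.linalg.solve(H, g)
    cov = jnp.linalg.inv(-hess_fn(beta, X, y))
    return beta, cov


# Simulated data (hypothetical, for illustration only).
key_x, key_y = jax.random.split(jax.random.PRNGKey(0))
X = jax.random.normal(key_x, (200, 3))
beta_true = jnp.array([1.0, -2.0, 0.5])
y = jax.random.bernoulli(key_y, jax.nn.sigmoid(X @ beta_true)).astype(jnp.float64)

mode, cov = laplace_approximation(X, y)
```

The resulting approximation is the Gaussian with mean `mode` and covariance `cov`. In this dense version the Hessian costs grow quickly with the number of parameters, which is the kind of cost that sparsity-aware differentiation is meant to avoid.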
