Creating Confidence Intervals for Machine Learning Classifiers

A guide to creating confidence intervals for evaluating machine learning models, covering multiple methods to quantify performance uncertainty.

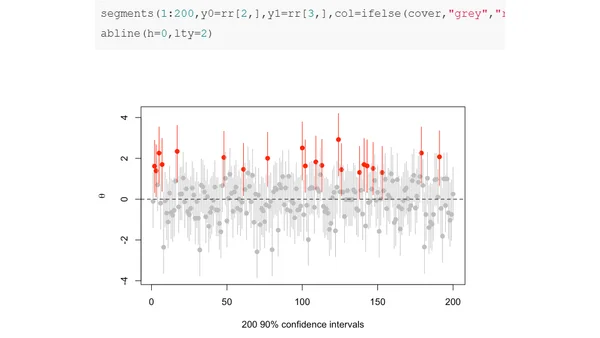

A statistical analysis discussing the limitations of confidence intervals, using examples from small-area sampling to illustrate their weak properties.

Explains Chebyshev's inequality, a probability bound, and its application to calculating Upper Confidence Limits (UCL) in environmental monitoring.

Explores the surprising effectiveness and conservative nature of the Bonferroni correction for multiple hypothesis testing, even with many tests.

Explores the critical difference between frequentist confidence intervals and Bayesian credible regions, arguing why frequentism often fails scientific inquiry.