Data Virtualization and the Semantic Layer: Query Without Copying

Explains how data virtualization and a semantic layer enable querying distributed data without copying, reducing costs and improving freshness.

Alex Merced — Developer and technical writer sharing in-depth insights on data engineering, Apache Iceberg, data lakehouse architectures, Python tooling, and modern analytics platforms, with a strong focus on practical, hands-on learning.

425 articles from this blog

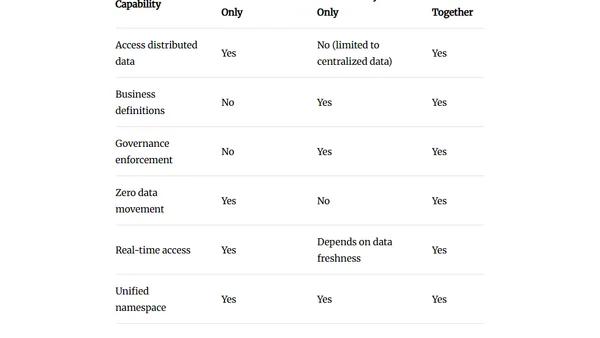

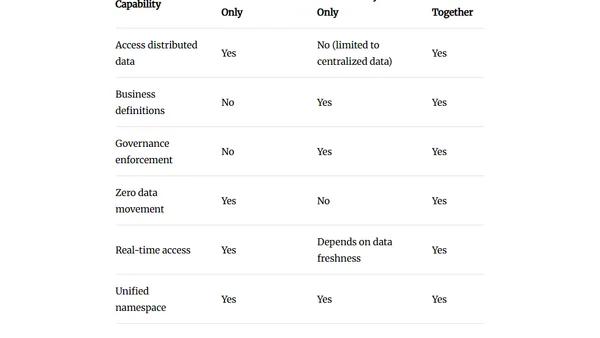

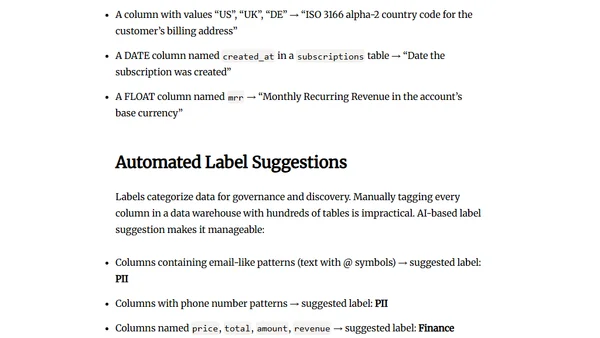

Explains how a self-documenting semantic layer uses AI to automate data documentation, reducing manual work and governance risks for data teams.

Explains why AI data analytics fails without a semantic layer that defines business metrics and ensures accurate, secure queries.

A guide to choosing between batch and streaming data processing models based on actual freshness requirements and cost.

A practical, tool-agnostic checklist of essential best practices for designing, building, and maintaining reliable data engineering pipelines.

Explains why transactional data models are inefficient for analytics and how to design denormalized, query-optimized models for better performance.

Explores how data modeling principles adapt for modern lakehouse architectures using open formats like Apache Iceberg and the Medallion pattern.

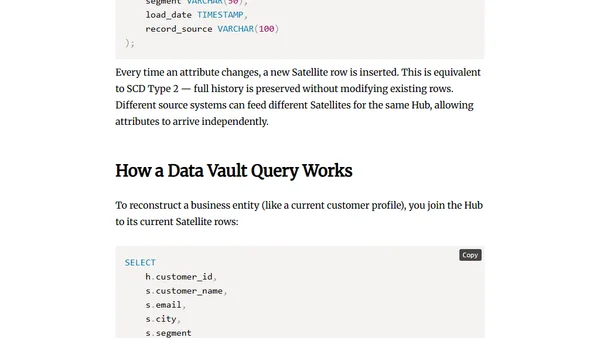

Explains Data Vault data modeling, its core components (Hubs, Links, Satellites), and the problems it solves for complex, evolving data sources.

Compares Star Schema and Snowflake Schema data models, explaining their structures, trade-offs, and when to use each for optimal data warehousing.

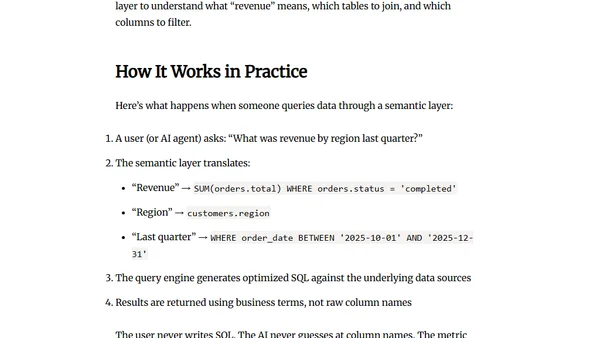

Explains what a semantic layer is, its components, and how it provides consistent business definitions for data queries and AI agents.

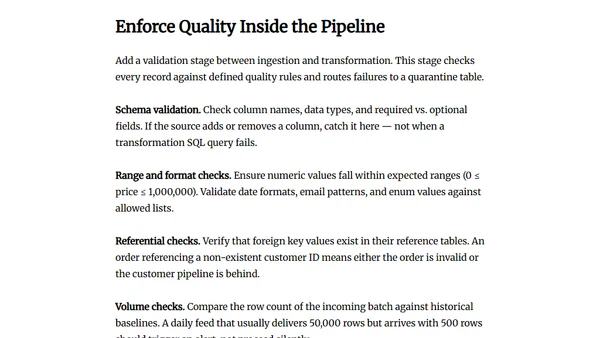

Argues that data quality must be enforced at the pipeline's ingestion point, not patched in dashboards, to ensure consistent, reliable data.

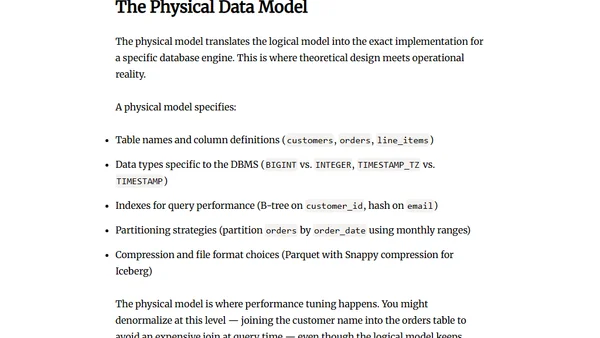

Explains the three levels of data modeling (conceptual, logical, physical) and their importance in database design.

A comprehensive guide exploring the taxonomy, tools, and best practices for using AI-assisted coding tools in modern software development.

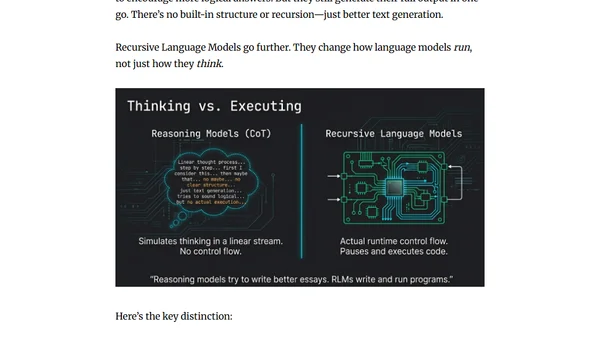

Explains Recursive Language Models (RLMs), which are LLMs that call themselves to break complex tasks into structured, reusable steps.

A 2025 year-in-review of key Apache data projects: Iceberg, Polaris, Parquet, and Arrow, detailing their major updates and future roadmap.

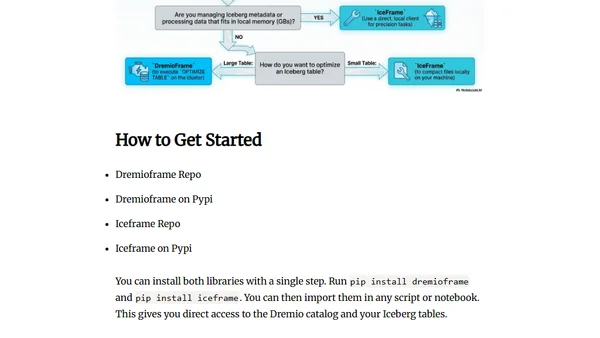

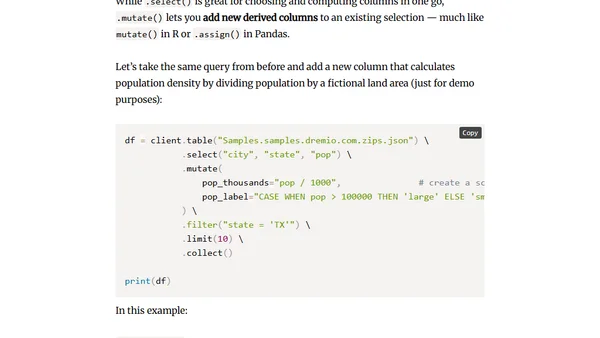

Introduces DremioFrame and IceFrame, two new Python libraries for simplifying work with Dremio and Apache Iceberg tables.

Introduces dremioframe, a Python DataFrame library for querying Dremio with a pandas-like API, generating SQL under the hood.

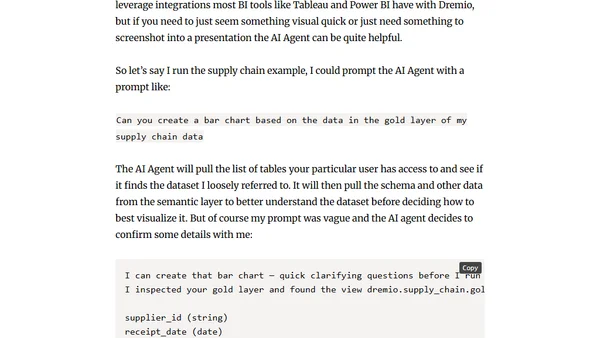

A hands-on tutorial exploring Dremio Cloud Next Gen's new free trial, covering its lakehouse platform, AI features, and SQL capabilities.

A comprehensive guide to learning Apache Iceberg, data lakehouse architecture, and Agentic AI with curated tutorials, tools, and resources.

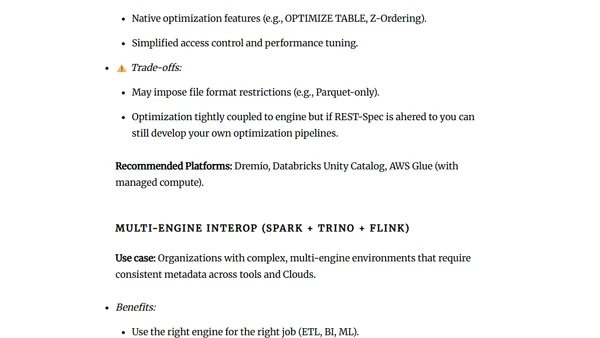

Explores the commercial Apache Iceberg catalog ecosystem, focusing on REST Catalog standards, optimization strategies, and architectural trade-offs.