When to Use Apache XTable or Delta Lake UniForm for Data Lakehouse Interoperability

Explores solutions like Apache XTable and Delta Lake UniForm for enabling interoperability between different data lakehouse table formats.

Alex Merced — Developer and technical writer sharing in-depth insights on data engineering, Apache Iceberg, data lakehouse architectures, Python tooling, and modern analytics platforms, with a strong focus on practical, hands-on learning.

418 articles from this blog

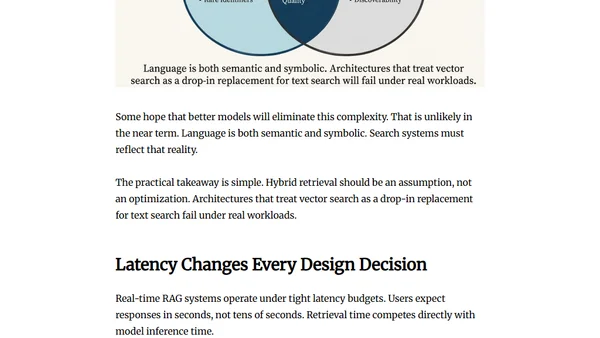

Argues that RAG system failures stem from data engineering issues like fragmented data and governance, not from model or vector database choices.

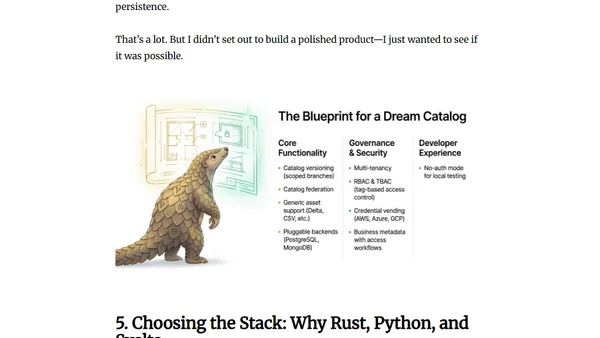

A developer shares the story of building Pangolin, an open-source lakehouse catalog, using an AI coding agent during a holiday break.

A technical guide on designing and implementing a modern data lakehouse architecture using the Apache Iceberg table format in 2025.

A look at 10 upcoming features and enhancements for the Apache Iceberg data lakehouse table format, expected in 2025.

A guide to setting up and using Dremio's Auto-Ingest feature for automated, event-driven data loading into Apache Iceberg tables from cloud storage.

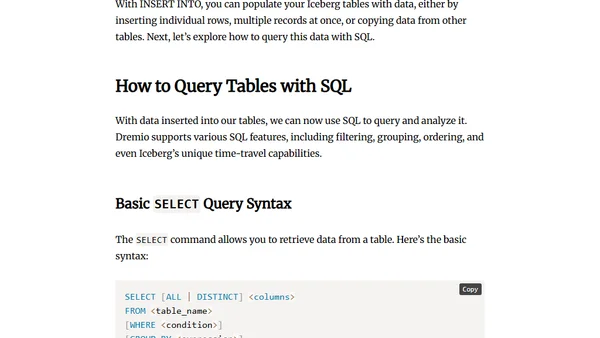

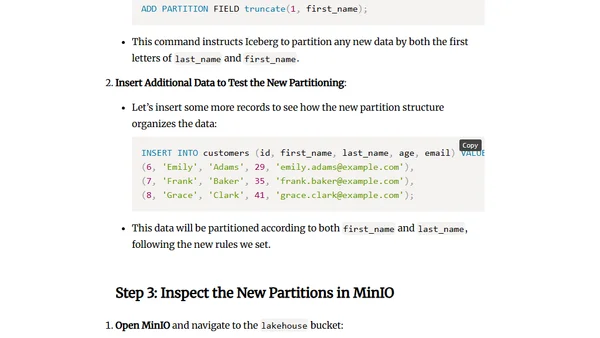

A tutorial on using SQL with Apache Iceberg tables in the Dremio data lakehouse platform, covering setup and core operations.

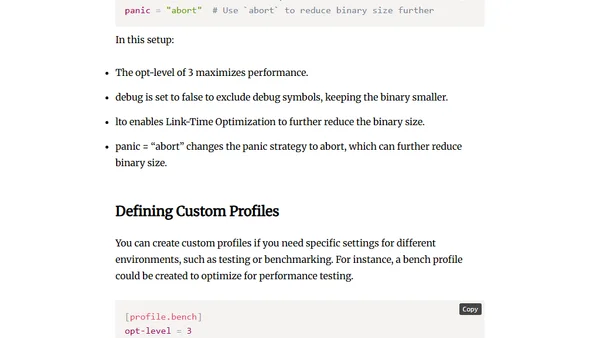

A guide to understanding and using the Cargo.toml file, the central configuration file for managing Rust projects and dependencies with Cargo.

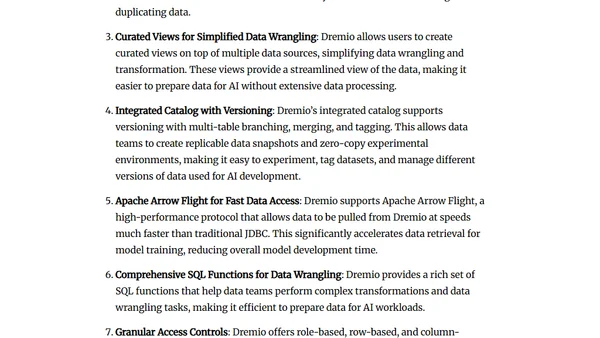

Explores how Dremio and Apache Iceberg create AI-ready data by ensuring accessibility, scalability, and governance for machine learning workloads.

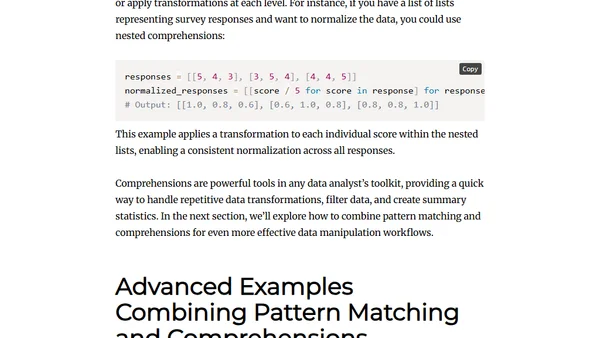

Explores using Python's pattern matching and comprehensions for efficient data cleaning, transformation, and analysis.

A hands-on tutorial for setting up a local data lakehouse with Apache Iceberg, Dremio, and Nessie using Docker in under 10 minutes.

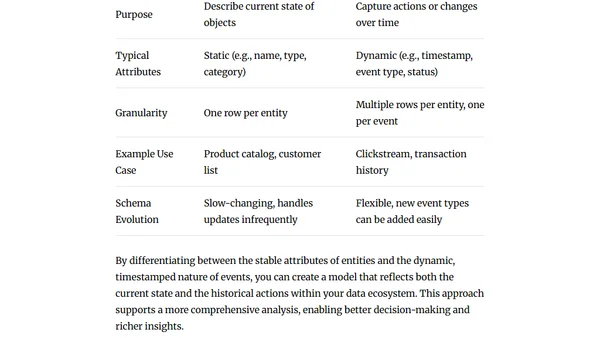

Explores the differences between event and entity data modeling, when to use each approach, and practical design considerations for structuring data effectively.

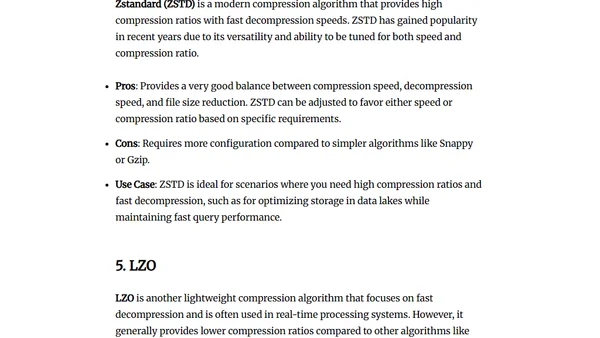

Explores compression algorithms in Parquet files, comparing Snappy, Gzip, Brotli, Zstandard, and LZO for storage and performance.

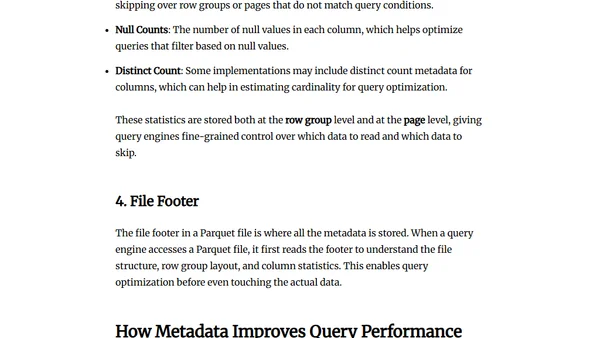

Explores how metadata in Parquet files improves data efficiency and query performance, covering file, row group, and column-level metadata.

Explains encoding techniques in Parquet files, including dictionary, RLE, bit-packing, and delta encoding, to optimize storage and performance.

Explores why Parquet is the ideal columnar file format for optimizing storage and query performance in modern data lake and lakehouse architectures.

Final guide in a series covering performance tuning and best practices for optimizing Apache Parquet files in big data workflows.

A practical guide to reading and writing Parquet files in Python using PyArrow and FastParquet libraries.

Explains the hierarchical structure of Parquet files, detailing how pages, row groups, and columns optimize storage and query performance.

An introduction to Apache Parquet, a columnar storage file format for efficient data processing and analytics.