How to Use Hugging Face Models with Ollama

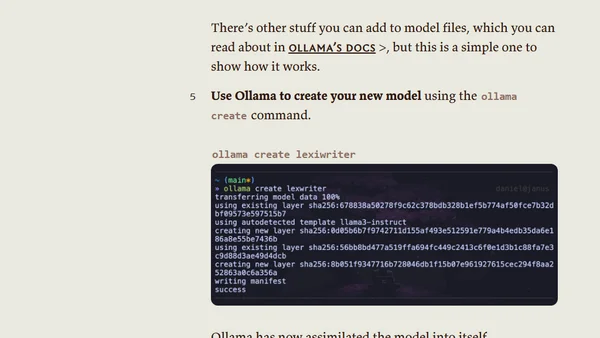

Read OriginalThis article provides a step-by-step technical guide for downloading a GGUF model file from Hugging Face, creating a Modelfile, and using Ollama's CLI to run the custom model locally. It bridges the gap between Ollama's simplicity and Hugging Face's extensive model selection, specifically demonstrating the process with a Llama 3.1 model for creative writing tasks.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser

Top of the Week

No top articles yet