🎙️🤖 Real-Time AI Conversations in .NET — Local STT, TTS, VAD and LLM

A guide to building a real-time voice conversation app in .NET using local AI for speech-to-text, text-to-speech, and LLM integration.

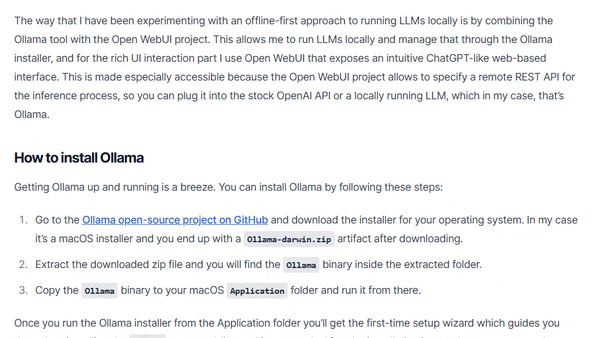

A guide on running Large Language Models (LLMs) locally for inference, covering tools like Ollama and Open WebUI for privacy and cost control.

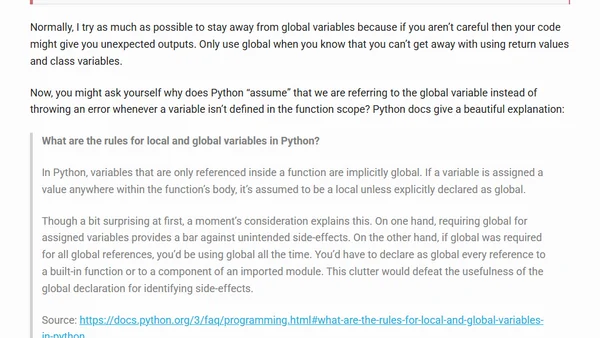

Explains Python variable scopes with code examples, focusing on common errors when using local and global variables.