Evaluate LLMs and RAG: A Practical Example Using Langchain and Hugging Face

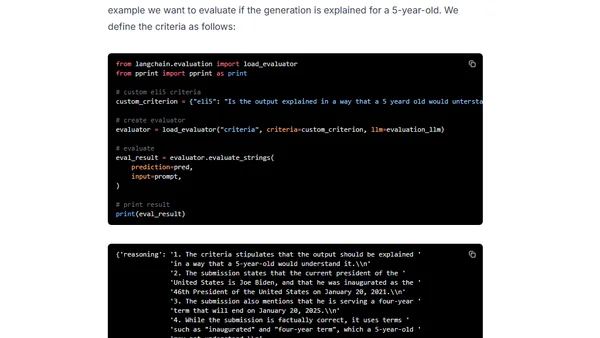

This technical tutorial explores practical methods for evaluating Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) systems. It walks through a hands-on example that uses Langchain and the Hugging Face Inference API to assess models such as Llama-2 on criteria such as helpfulness and relevance, and it discusses using a stronger LLM such as GPT-4 as an automated judge of those outputs.
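To make the LLM-as-judge idea concrete, here is a minimal, library-free sketch of criteria-based evaluation. It is not the tutorial's code and does not use the Langchain or Hugging Face APIs: the criterion wording, the function names (`build_judge_prompt`, `parse_verdict`, `evaluate`), and the `stub_judge` stand-in are all assumptions made for illustration. In practice the stub would be replaced by a call to a real judge model.

```python
from typing import Callable, Dict, Optional

# Hypothetical criterion descriptions; the wording is an assumption,
# not taken from the tutorial or from any library.
CRITERIA: Dict[str, str] = {
    "helpfulness": "Is the submission helpful, insightful, and appropriate?",
    "relevance": "Is the submission directly relevant to the question asked?",
}


def build_judge_prompt(question: str, answer: str, criterion: str) -> str:
    """Build the grading prompt sent to the judge model for one criterion."""
    description = CRITERIA[criterion]
    return (
        "You are grading a model's answer against one criterion.\n"
        f"[Question]: {question}\n"
        f"[Submission]: {answer}\n"
        f"[Criterion] {criterion}: {description}\n"
        "Explain your reasoning briefly, then give the verdict as a single "
        "letter, Y or N, on the final line."
    )


def parse_verdict(judge_output: str) -> Dict[str, Optional[object]]:
    """Split the judge's reply into reasoning and a Y/N verdict (score 1/0)."""
    lines = [ln.strip() for ln in judge_output.strip().splitlines() if ln.strip()]
    if not lines or lines[-1].upper() not in ("Y", "N"):
        # The judge did not follow the format; report no score instead of guessing.
        return {"reasoning": judge_output.strip(), "value": None, "score": None}
    verdict = lines[-1].upper()
    return {
        "reasoning": "\n".join(lines[:-1]),
        "value": verdict,
        "score": 1 if verdict == "Y" else 0,
    }


def evaluate(
    question: str, answer: str, criterion: str, judge: Callable[[str], str]
) -> Dict[str, Optional[object]]:
    """Grade one answer on one criterion using any callable judge."""
    return parse_verdict(judge(build_judge_prompt(question, answer, criterion)))


def stub_judge(prompt: str) -> str:
    """Stand-in for a real judge LLM (assumption: replace with an API call)."""
    return "The answer addresses the question directly.\nY"


if __name__ == "__main__":
    result = evaluate(
        "What is RAG?",
        "RAG combines document retrieval with text generation.",
        "relevance",
        stub_judge,
    )
    print(result)
```

Keeping the judge as a plain callable means the same harness can grade answers from different models, or compare different judge models, without changing the prompt or the parsing logic.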
