Deploy Llama 3 70B on AWS Inferentia2 with Hugging Face Optimum

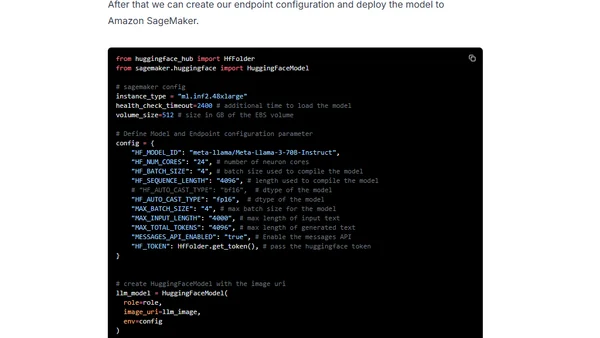

This tutorial details the process of deploying the large language model Meta-Llama-3-70B-Instruct on AWS Inferentia2 infrastructure. It covers setting up the environment with Amazon SageMaker, using the Hugging Face LLM Inf2 container, deploying the model, running inference, and benchmarking performance with llmperf.
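The deployment and inference steps summarized above can be sketched with the SageMaker Python SDK. This is a minimal sketch under stated assumptions, not the tutorial's own code. The container environment values (core count, batch size, sequence lengths, `bf16` cast type), the `ml.inf2.48xlarge` instance type, the token placeholder, the health-check timeout, and the helper names `build_container_env`, `format_llama3_prompt`, `build_payload`, and `deploy` are all hypothetical choices made for illustration. Only `deploy` talks to AWS, and it imports `sagemaker` lazily so the rest runs without the SDK installed.

```python
from typing import Dict, List

# Hypothetical settings for illustration; the tutorial's exact values are not given here.
MODEL_ID = "meta-llama/Meta-Llama-3-70B-Instruct"
INSTANCE_TYPE = "ml.inf2.48xlarge"  # assumption: 12 Inferentia2 chips, 24 NeuronCores


def build_container_env(
    model_id: str = MODEL_ID,
    num_cores: int = 24,
    batch_size: int = 4,
    sequence_length: int = 4096,
    max_input_length: int = 4000,
    hf_token: str = "<your-hf-token>",  # hypothetical placeholder; Llama 3 is a gated model
) -> Dict[str, str]:
    """Assumed env vars for the Hugging Face LLM Inf2 container (TGI on Neuron).

    SageMaker passes these to the container as environment variables,
    so every value is a string.
    """
    if max_input_length >= sequence_length:
        raise ValueError("max_input_length must leave room for generated tokens")
    return {
        "HF_MODEL_ID": model_id,
        "HF_NUM_CORES": str(num_cores),              # NeuronCores to shard the model across
        "HF_BATCH_SIZE": str(batch_size),            # static batch size of the compiled model
        "HF_SEQUENCE_LENGTH": str(sequence_length),  # static sequence length of the compiled model
        "HF_AUTO_CAST_TYPE": "bf16",                 # assumption: precision used for compilation
        "MAX_BATCH_SIZE": str(batch_size),
        "MAX_INPUT_LENGTH": str(max_input_length),
        "MAX_TOTAL_TOKENS": str(sequence_length),
        "HF_TOKEN": hf_token,
    }


def format_llama3_prompt(messages: List[Dict[str, str]]) -> str:
    """Render chat messages with the Llama 3 Instruct template, ending on the assistant turn."""
    prompt = "<|begin_of_text|>"
    for msg in messages:
        prompt += (
            f"<|start_header_id|>{msg['role']}<|end_header_id|>\n\n"
            f"{msg['content'].strip()}<|eot_id|>"
        )
    return prompt + "<|start_header_id|>assistant<|end_header_id|>\n\n"


def build_payload(messages: List[Dict[str, str]], max_new_tokens: int = 256) -> dict:
    """Request body in the text-generation-inference format (sampling values are illustrative)."""
    return {
        "inputs": format_llama3_prompt(messages),
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "do_sample": True,
            "temperature": 0.6,
            "top_p": 0.9,
            "stop": ["<|eot_id|>"],  # stop generating at the end of the assistant turn
        },
    }


def deploy(env: Dict[str, str], role_arn: str):
    """Create a real endpoint; needs the sagemaker SDK, AWS credentials, and Inf2 quota."""
    from sagemaker.huggingface import HuggingFaceModel, get_huggingface_llm_image_uri

    image_uri = get_huggingface_llm_image_uri("huggingface-neuronx")
    model = HuggingFaceModel(role=role_arn, image_uri=image_uri, env=env)
    return model.deploy(
        initial_instance_count=1,
        instance_type=INSTANCE_TYPE,
        container_startup_health_check_timeout=3600,  # assumption: a 70B model loads slowly
    )
```

With the SDK installed and credentials configured, calling `deploy(build_container_env(hf_token=...), role_arn)` and then `predictor.predict(build_payload(messages))` should return a text-generation-inference response, typically a list whose first element carries a `generated_text` field. Keeping the prompt formatting and environment construction as plain functions means they can be checked locally before paying for an Inferentia2 instance.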
