Building an Ollama-Powered GitHub Copilot Extension

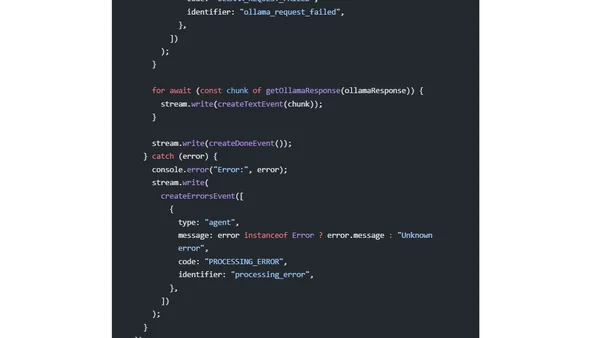

This technical article details the development of a GitHub Copilot extension that integrates Ollama to run the CodeLlama model locally. It explains the benefits of local AI processing, outlines the extension's structure built with Hono.js, and provides code snippets for configuration and handling requests, demonstrating how to bring private, low-latency AI coding assistance into the development workflow.
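As a rough illustration of the request handling the summary mentions, the sketch below forwards a list of chat messages to a locally running Ollama server and returns the model's reply. It is not taken from the original article: the helper names `buildOllamaChatRequest` and `askOllama` and the `ChatMessage` type are hypothetical, the URL assumes Ollama's default local port (11434), and the model tag `codellama` assumes that model has already been pulled. The Hono.js routing layer and any Copilot-specific payload handling are deliberately left out.

```typescript
// Sketch only: helper and type names here are hypothetical, not from the article.
type ChatRole = "system" | "user" | "assistant";

interface ChatMessage {
  role: ChatRole;
  content: string;
}

interface OllamaChatRequest {
  model: string;
  messages: ChatMessage[];
  stream: boolean;
}

// Assumption: Ollama is running locally on its default port.
const OLLAMA_CHAT_URL = "http://localhost:11434/api/chat";

// Build the JSON body for Ollama's chat endpoint.
// Messages that contain only whitespace are dropped before sending.
function buildOllamaChatRequest(
  messages: ChatMessage[],
  model: string = "codellama",
): OllamaChatRequest {
  const cleaned = messages.filter((m) => m.content.trim().length > 0);
  // stream: false asks Ollama for one complete JSON response
  // instead of a stream of partial chunks.
  return { model, messages: cleaned, stream: false };
}

// Send the messages to the local model and return the reply text.
async function askOllama(messages: ChatMessage[]): Promise<string> {
  const response = await fetch(OLLAMA_CHAT_URL, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildOllamaChatRequest(messages)),
  });
  if (!response.ok) {
    throw new Error(`Ollama request failed with status ${response.status}`);
  }
  // A non-streaming chat response carries the reply under message.content.
  const data = (await response.json()) as { message?: { content?: string } };
  return data.message?.content ?? "";
}
```

In an extension like the one described, a web framework route handler would presumably call something like `askOllama` with the conversation it receives and relay the answer back. Because the request never leaves the machine, the prompt and code context stay private, which is the main benefit the article attributes to local processing.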
