Notes on Causally Correct Partial Models

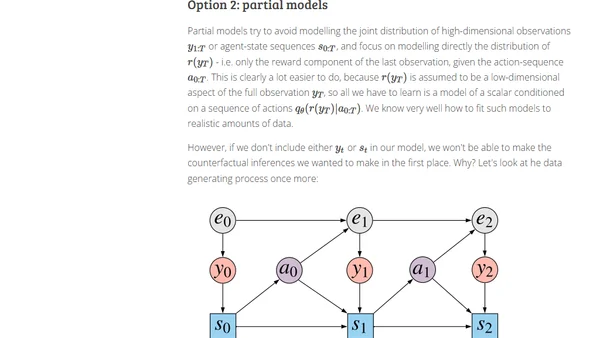

This article provides a detailed breakdown of the paper 'Causally Correct Partial Models for Reinforcement Learning' by Rezende et al. (2020). It explains the Partially Observable Markov Decision Process (POMDP) setup, the agent's goal of maximizing reward, and the core challenge: estimating the expected performance of a new policy, which is a causal (counterfactual) query. It then contrasts model-based approaches with model-free ones such as importance sampling.
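To make the model-free side of that contrast concrete, here is a minimal sketch of an ordinary importance sampling estimator for the expected return of a new (target) policy, using trajectories logged under a different (behavior) policy. It is an illustration, not code from the paper or the article: the function name, the trajectory layout, and the toy policies are all hypothetical, and it assumes the behavior policy's action probabilities are known and depend only on the logged observations.

```python
def importance_sampling_estimate(trajectories, target_policy, behavior_policy):
    """Estimate the expected return of target_policy from logged data.

    trajectories: list of trajectories, each a list of
        (observation, action, reward) tuples collected under behavior_policy.
    target_policy, behavior_policy: functions (observation, action) -> probability.
    All names here are hypothetical and chosen for illustration only.
    """
    total = 0.0
    for trajectory in trajectories:
        weight = 1.0   # product of per-step probability ratios
        ret = 0.0      # undiscounted return of this trajectory
        for obs, action, reward in trajectory:
            weight *= target_policy(obs, action) / behavior_policy(obs, action)
            ret += reward
        total += weight * ret
    return total / len(trajectories)


# Toy one-step problem: two actions, reward equals the action taken.
def uniform_behavior(obs, action):
    return 0.5  # behavior policy picks either action with equal probability


def always_one(obs, action):
    return 1.0 if action == 1 else 0.0  # target policy always picks action 1


logged = [[(0, 0, 0.0)], [(0, 1, 1.0)]]  # one logged episode per action
estimate = importance_sampling_estimate(logged, always_one, uniform_behavior)
print(estimate)
```

The estimator reweights each logged return by how much more (or less) likely the target policy was to produce that action sequence. It needs no model of the environment, but the weights are a product over time steps, so its variance can grow quickly with trajectory length.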
