Creating a Local Data Lakehouse using Spark/Minio/Dremio/Nessie

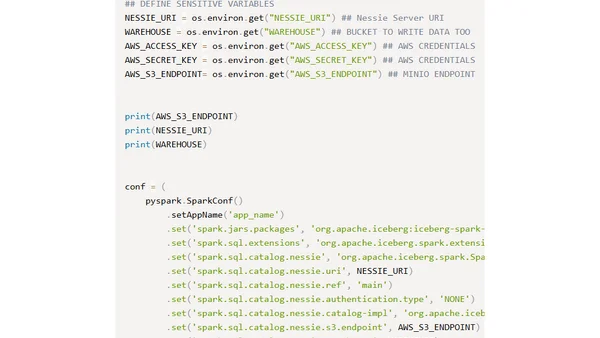

This technical guide explains how to create a local data lakehouse, an architecture that combines features of data lakes and data warehouses. It provides a step-by-step tutorial that uses Docker Compose to orchestrate Apache Spark for data ingestion, Minio for S3-compatible storage, Apache Iceberg as the table format, Nessie as the data catalog, and Dremio as the query engine for analytics.
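
To make the wiring between these components more concrete, here is a minimal sketch of the Spark settings that would point an Iceberg catalog at Nessie and store table data in Minio. It is not taken from the original guide: the function name `lakehouse_spark_conf`, the catalog name `nessie`, the hostnames `nessie` and `minio`, the ports, and the `warehouse` bucket are all assumptions about how the Docker Compose services might be named. The property keys and class names are the ones used by the Iceberg Spark runtime and the Nessie Spark extensions, which must be on the Spark classpath.

```python
def lakehouse_spark_conf(
    catalog="nessie",                          # assumed catalog name
    nessie_uri="http://nessie:19120/api/v1",   # assumed Compose service name and port
    s3_endpoint="http://minio:9000",           # assumed Compose service name and port
    warehouse="s3a://warehouse/",              # assumed bucket created in Minio
    ref="main",                                # Nessie branch to read from and write to
):
    """Return Spark settings for an Iceberg catalog backed by Nessie and Minio."""
    prefix = f"spark.sql.catalog.{catalog}"
    return {
        # Enable Iceberg and Nessie SQL extensions in Spark.
        "spark.sql.extensions": ",".join([
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
            "org.projectnessie.spark.extensions.NessieSparkSessionExtensions",
        ]),
        # Register the catalog and tell Iceberg to use Nessie for metadata.
        prefix: "org.apache.iceberg.spark.SparkCatalog",
        f"{prefix}.catalog-impl": "org.apache.iceberg.nessie.NessieCatalog",
        f"{prefix}.uri": nessie_uri,
        f"{prefix}.ref": ref,
        # Store data files in Minio through Iceberg's S3 file IO.
        f"{prefix}.warehouse": warehouse,
        f"{prefix}.io-impl": "org.apache.iceberg.aws.s3.S3FileIO",
        f"{prefix}.s3.endpoint": s3_endpoint,
        # Minio is usually addressed by path rather than by bucket subdomain.
        f"{prefix}.s3.path-style-access": "true",
    }
```

Each key/value pair can be passed to `SparkSession.builder.config(key, value)` before calling `getOrCreate()`. Credentials for Minio are left out here on purpose; one common approach is to supply them through the `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` environment variables of the Spark container.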
