A Journey from AI to LLMs and MCP - 2 - How LLMs Work — Embeddings, Vectors, and Context Windows

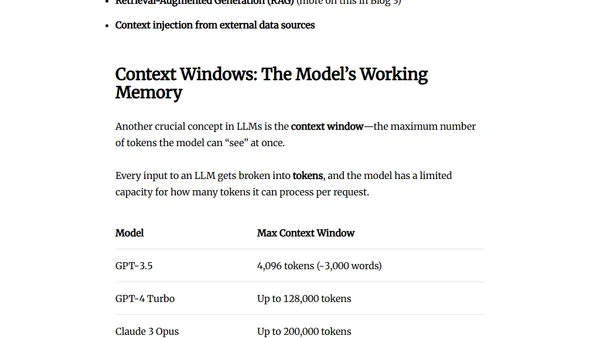

This technical article examines the inner workings of Large Language Models (LLMs). It explains core concepts such as embeddings, which convert words into numerical vectors, and shows how those vectors enable semantic understanding and search. It also covers the role and limitations of context windows, giving a foundational look at the mathematics behind LLM operations.
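To make the idea of embeddings and semantic search concrete, here is a minimal sketch. The three-dimensional vectors, the `EMBEDDINGS` table, and the helper names `cosine_similarity` and `most_similar` are hypothetical, chosen only for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and the article itself may use a different approach.

```python
import math

# Hypothetical, hand-picked 3-dimensional embeddings (illustration only).
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "kitten": [0.85, 0.9, 0.15],
    "car": [0.1, 0.2, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query, embeddings):
    """Return the other word whose vector is closest to the query word's vector."""
    query_vec = embeddings[query]
    scores = {
        word: cosine_similarity(query_vec, vec)
        for word, vec in embeddings.items()
        if word != query
    }
    return max(scores, key=scores.get)


if __name__ == "__main__":
    print(most_similar("cat", EMBEDDINGS))
```

With these toy vectors, "cat" sits much closer to "kitten" than to "car", which is the basic mechanism behind semantic search: related meanings map to nearby vectors, so a nearest-neighbor lookup retrieves related items.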
