To Chunk or Not to Chunk With the Long Context Single Embedding Models

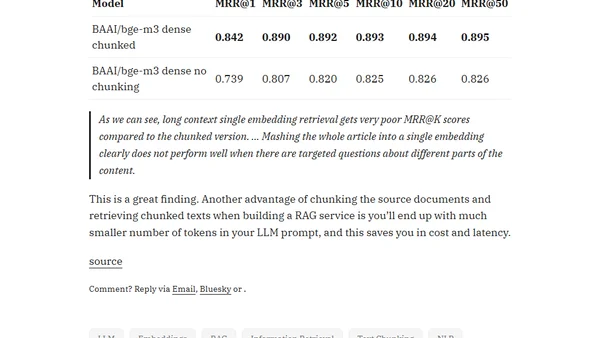

Read OriginalThis technical article analyzes the effectiveness of long-context embedding models for document retrieval. It details an experiment comparing chunked and non-chunked document embedding using the BGE-M3 model, finding that chunking significantly improves retrieval accuracy (MRR scores) and reduces LLM prompt costs and latency in RAG systems.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser

Top of the Week

No top articles yet