Lessons After a Half Billion Gpt Tokens

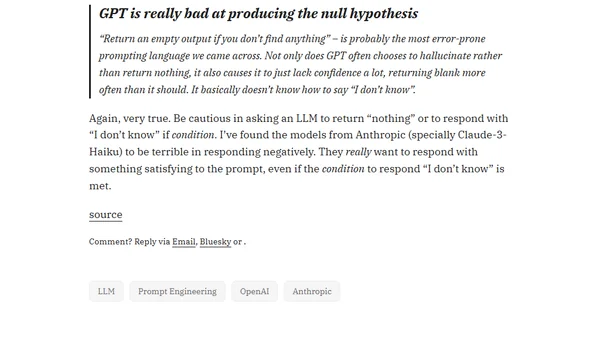

Read OriginalThe article shares insights from building features with over half a billion GPT tokens, emphasizing that shorter, less prescriptive prompts often yield better results than over-specified ones. It highlights a key challenge: LLMs struggle to reliably return null or 'I don't know' responses, often hallucinating instead. The author compares experiences with models like GPT-4, GPT-3.5, and Claude variants.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser

Top of the Week

No top articles yet