BERT Text Classification in a different language

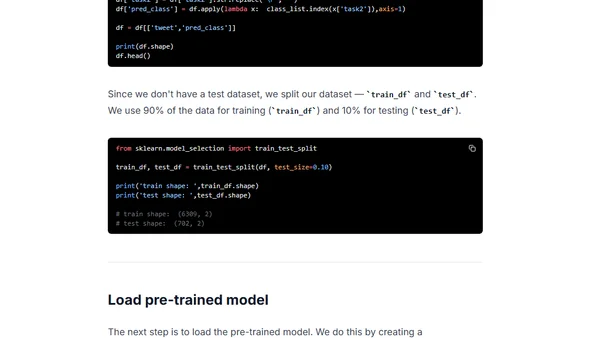

This technical tutorial explains how to build a monolingual, non-English, multi-class text classification model with BERT. It covers choosing a pre-trained model, using the Simple Transformers library, and fine-tuning on the GermEval 2019 dataset of German tweets to classify abusive language. The guide walks through installation, training, evaluation, and making predictions.
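The workflow summarized above can be sketched roughly as follows. This is a minimal illustration, not the tutorial's own code: the checkpoint name `bert-base-german-cased`, the class names `OTHER` and `INSULT`, the toy rows, and the helpers `build_label_map`, `encode_rows`, and `fine_tune` are all assumptions made for the example. It presumes Simple Transformers is installed (for instance with `pip install simpletransformers`); the heavy imports are kept inside `fine_tune` so the label-preparation part runs without them.

```python
from typing import Dict, List, Tuple


def build_label_map(labels: List[str]) -> Dict[str, int]:
    """Map each distinct class name to an integer id, in sorted order."""
    return {name: idx for idx, name in enumerate(sorted(set(labels)))}


def encode_rows(rows: List[Tuple[str, str]], label_map: Dict[str, int]) -> List[list]:
    """Turn (text, class name) pairs into [text, integer label] rows."""
    return [[text, label_map[label]] for text, label in rows]


def fine_tune(train_rows, eval_rows, num_labels, model_name="bert-base-german-cased"):
    """Fine-tune and evaluate a BERT classifier (hypothetical helper).

    model_name is an assumed German checkpoint; swap in whichever
    pre-trained model the tutorial selects.
    """
    # Imported lazily so the helpers above work without these packages.
    import pandas as pd
    from simpletransformers.classification import ClassificationModel

    # Simple Transformers expects a text column and an integer label column.
    train_df = pd.DataFrame(train_rows, columns=["text", "labels"])
    eval_df = pd.DataFrame(eval_rows, columns=["text", "labels"])

    model = ClassificationModel(
        "bert",
        model_name,
        num_labels=num_labels,
        args={"num_train_epochs": 1, "overwrite_output_dir": True},
        use_cuda=False,  # assumption: CPU-only; set True if a GPU is available
    )
    model.train_model(train_df)
    result, model_outputs, wrong_predictions = model.eval_model(eval_df)
    return model, result


# Toy rows standing in for the real dataset (illustrative, not actual tweets).
rows = [
    ("Das ist ein netter Tweet", "OTHER"),
    ("Beispiel fuer eine Beleidigung", "INSULT"),
]
label_map = build_label_map([label for _, label in rows])
encoded = encode_rows(rows, label_map)
```

Once trained, calling `model.predict(["Ein neuer Tweet"])` on the returned model yields the predicted label ids together with the raw model outputs; the ids can be mapped back to class names by inverting `label_map`.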
