MicroGrad.jl: Part 4 Extensions

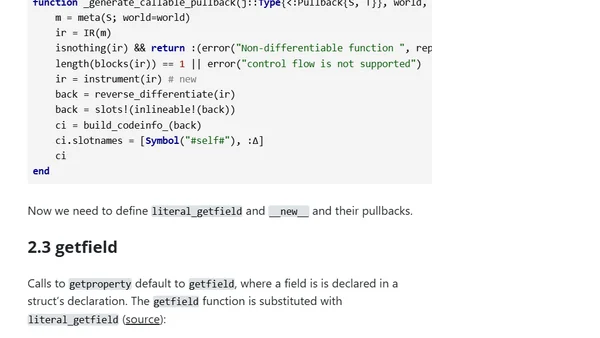

This technical article, part 4 of a series on automatic differentiation in Julia, extends the MicroGrad.jl library. It explains how to implement pullbacks for core functions such as `map` and `getproperty`, and for anonymous functions. None of these have a derivative in the usual mathematical sense, yet they need pullbacks before a generic gradient descent optimizer can be built. The tutorial demonstrates the approach by fitting a polynomial model, handling cases that the standard rules in ChainRules.jl do not cover.
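The ideas summarized above can be illustrated with a small hand-written sketch. It is not taken from MicroGrad.jl: the names `PolyClosure`, `Model`, `rrule_call`, `rrule_getproperty`, `rrule_map`, `loss_and_grad`, `update`, and `fit` are all hypothetical. It assumes the ChainRules.jl convention that a rule returns `(value, pullback)` and that a pullback returns one cotangent per argument, starting with the function itself. The anonymous function is modeled as an explicit callable struct whose field stands in for the captured variable, so its cotangent is a `NamedTuple` over that field.

```julia
# Hypothetical rrule-style helpers (not MicroGrad.jl's actual API). Each returns
# (value, pullback); a pullback maps an output cotangent to a tuple of cotangents
# for (function, args...), following the ChainRules.jl convention.

# Stand-in for an anonymous function such as `x -> sum(w[k] * x^(k-1) ...)`:
# a callable struct whose field `w` plays the role of the captured variable.
struct PolyClosure
    w::Vector{Float64}
end
(p::PolyClosure)(x) = sum(p.w[k] * x^(k - 1) for k in eachindex(p.w))

struct Model
    poly::PolyClosure
end

# Calling the closure: its cotangent is a NamedTuple over its captured fields.
function rrule_call(p::PolyClosure, x)
    powers = [x^(k - 1) for k in eachindex(p.w)]
    # x is data here, so its cotangent is returned as `nothing`
    call_pullback(dy) = ((w = dy .* powers,), nothing)
    return p(x), call_pullback
end

# getproperty has no derivative in the calculus sense: the pullback just routes
# the incoming cotangent into the accessed field of a NamedTuple.
function rrule_getproperty(obj, name::Symbol)
    getproperty_pullback(dy) = (nothing, NamedTuple{(name,)}((dy,)), nothing)
    return getproperty(obj, name), getproperty_pullback
end

# map: apply the element rule to every input, then on the way back sum the
# per-element cotangents of `f`, since the same `f` was used for every element.
function rrule_map(f, xs::AbstractVector)
    results = [rrule_call(f, x) for x in xs]
    ys = first.(results)
    pullbacks = last.(results)
    function map_pullback(dys)
        grads = [pb(dy) for (pb, dy) in zip(pullbacks, dys)]
        df = reduce((a, b) -> map(+, a, b), (g[1] for g in grads))
        dxs = [g[2] for g in grads]
        return (nothing, df, dxs)
    end
    return ys, map_pullback
end

# Mean squared error of model.poly mapped over xs, chained through the pullbacks.
function loss_and_grad(model::Model, xs, ys)
    poly, pb_get = rrule_getproperty(model, :poly)
    yhat, pb_map = rrule_map(poly, xs)
    n = length(ys)
    loss = sum(abs2, yhat .- ys) / n
    dyhat = 2 .* (yhat .- ys) ./ n
    _, dpoly, _ = pb_map(dyhat)
    _, dmodel, _ = pb_get(dpoly)
    return loss, dmodel
end

# Generic gradient descent step: walk the struct's fields alongside the
# matching NamedTuple cotangent and rebuild the struct.
update(x::AbstractArray, dx::AbstractArray, η) = x .- η .* dx
update(x, ::Nothing, η) = x
function update(obj::T, grad::NamedTuple, η) where {T}
    fields = (update(getfield(obj, f), get(grad, f, nothing), η) for f in fieldnames(T))
    return T(fields...)
end

function fit(model, xs, ys; η=0.2, steps=2000)
    loss = Inf
    for _ in 1:steps
        loss, dmodel = loss_and_grad(model, xs, ys)
        model = update(model, dmodel, η)
    end
    return model, loss
end

# Usage: recover y = 1 + 2x - 3x^2 from 21 noise-free samples on [-1, 1].
xs = collect(range(-1, 1; length=21))
ys = 1 .+ 2 .* xs .- 3 .* xs .^ 2
model, loss = fit(Model(PolyClosure(zeros(3))), xs, ys)
println(model.poly.w)  # should be close to [1.0, 2.0, -3.0]
```

Using an explicit struct keeps the sketch independent of the field names Julia generates for a closure. Real anonymous functions also store their captured variables as fields, which is what makes a `NamedTuple` cotangent for them possible. Because `update` only relies on `fieldnames` and the matching `NamedTuple`, the same step works for any nesting of structs and arrays.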
