Gaussian distributed weights for LLMs

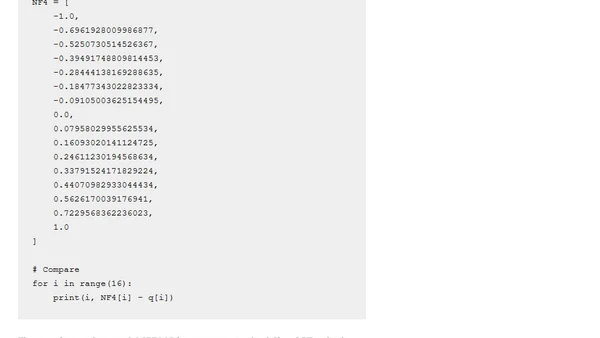

This article discusses NF4 and FP4, two 4-bit floating point formats used to quantize large language model (LLM) weights, particularly in bitsandbytes and Hugging Face models. It explains why NF4 spaces its 16 representable values according to a Gaussian distribution, so that they better match the roughly Gaussian distribution of LLM parameters, whereas FP4's values are fixed by its sign, exponent, and mantissa bits rather than fitted to the weights. The author critiques the QLoRA paper's description of NF4, highlighting ambiguities in how the quantile-based values are indexed and the difficulty of representing zero exactly. The article also describes attempts to reproduce the NF4 values listed in the paper's appendix and notes that the results do not match.
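To make the idea of Gaussian-spaced values concrete, here is a minimal Python sketch. It is not the QLoRA construction, whose exact definition the article describes as ambiguous, and it should not be expected to reproduce the NF4 table in the paper's appendix. The function name `gaussian_levels` and the choice of bin midpoints as quantile positions are assumptions made for illustration only.

```python
from statistics import NormalDist


def gaussian_levels(bits=4):
    """Return 2**bits code values placed at standard normal quantiles.

    Assumption for illustration: quantiles are taken at the midpoints of
    equal-probability bins, then rescaled so the extremes are -1 and 1.
    """
    n = 2 ** bits
    normal = NormalDist()  # mean 0, standard deviation 1
    # Midpoints (i + 0.5) / n avoid p = 0 and p = 1, where the quantile is infinite.
    quantiles = [normal.inv_cdf((i + 0.5) / n) for i in range(n)]
    scale = max(abs(q) for q in quantiles)
    return [q / scale for q in quantiles]


levels = gaussian_levels(4)
for value in levels:
    print(f"{value:+.4f}")
```

The printed values are packed more tightly near 0 than near ±1, which is the property that suits roughly Gaussian weights. Because this naive construction is symmetric with an even number of values, none of them is exactly 0, which illustrates the zero-representation issue the article raises.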
