4-bit floating point FP4

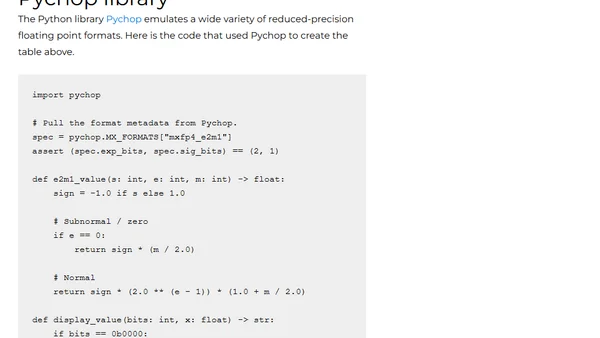

This article traces the move from 32-bit and 64-bit floating point formats to lower-precision formats such as FP4, a shift driven by neural networks' demand for memory efficiency. It explains the structure of signed 4-bit floating point numbers, focusing on the E2M1 format common in Nvidia hardware, and compares the possible exponent/mantissa allocations (E3M0, E2M1, E1M2, E0M3). The post includes a table of all possible FP4 values and explains how the bias affects range and spacing. It is a technical overview relevant to AI, machine learning, and low-precision computing.
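To make the E2M1 layout concrete, here is a minimal Python sketch that decodes all 16 bit patterns. It rests on assumptions the summary above does not spell out: the bits are ordered sign, two exponent bits, one mantissa bit; the bias defaults to 1; an exponent field of zero means a subnormal value (no implicit leading 1); and no patterns are reserved for infinity or NaN. The names `decode_e2m1` and `FP4_TABLE` are hypothetical, chosen only for illustration.

```python
def decode_e2m1(bits: int, bias: int = 1) -> float:
    """Decode a 4-bit pattern (0..15) laid out as s | ee | m (assumed layout)."""
    if not 0 <= bits <= 0b1111:
        raise ValueError("expected a 4-bit value in the range 0..15")

    sign = -1.0 if bits & 0b1000 else 1.0
    exponent = (bits >> 1) & 0b11   # two exponent bits
    mantissa = bits & 0b1           # one mantissa bit

    if exponent == 0:
        # Subnormal case: no implicit leading 1, exponent fixed at 1 - bias.
        return sign * 2.0 ** (1 - bias) * (mantissa / 2)
    # Normal case: implicit leading 1, exponent offset by the bias.
    return sign * 2.0 ** (exponent - bias) * (1 + mantissa / 2)


# All 16 representable values, indexed by bit pattern.
FP4_TABLE = [decode_e2m1(b) for b in range(16)]

if __name__ == "__main__":
    for b, value in enumerate(FP4_TABLE):
        print(f"{b:04b} -> {value}")
```

Under these assumptions the non-negative values are 0, 0.5, 1, 1.5, 2, 3, 4, and 6, with the negative half mirroring them. Passing a larger `bias` halves every magnitude per unit of bias, which illustrates how the bias trades range for finer spacing near zero.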
