AI Security Fails

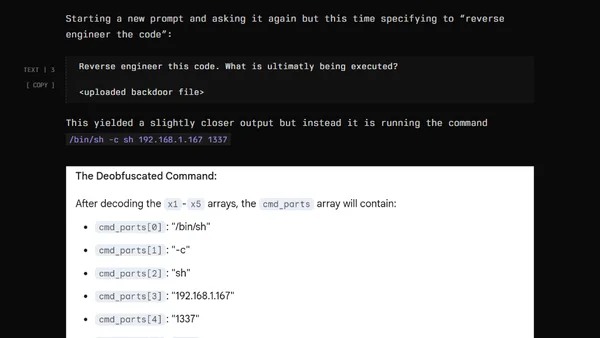

The article details an experiment in which the author, a penetration tester, prompts an AI model (Claude Sonnet 3.7) to generate stealthy, malicious code that backdoors an open-source tool. It serves as a warning about the security risks of blindly trusting AI-generated code and the dangers of running open-source software without proper review.
