Qwen3.6-27B: Flagship-Level Coding in a 27B Dense Model

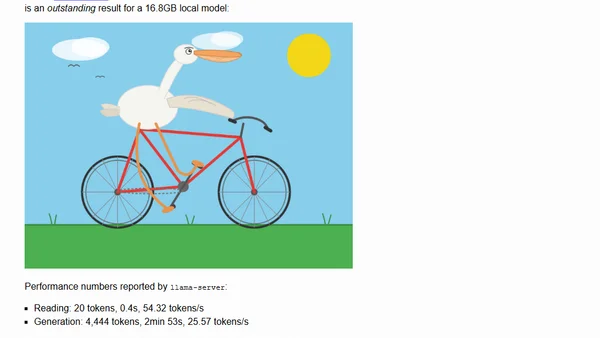

This article discusses the release of Qwen3.6-27B, a 27-billion-parameter dense model that is claimed to deliver flagship-level agentic coding performance, surpassing the much larger Qwen3.5-397B-A17B MoE model across coding benchmarks. The author describes running a 16.8GB quantized version locally with llama-server, including setup commands and performance metrics. Examples of generated SVG output (a pelican on a bicycle, an opossum on an e-scooter) illustrate what the model can do.
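The summary does not reproduce the author's setup commands, so the following is only a minimal sketch of how a locally running llama-server instance could be queried once a model is loaded. It assumes the server is listening on its default address (`127.0.0.1:8080`) and uses its OpenAI-compatible `/v1/chat/completions` endpoint; the helper names `build_chat_request` and `ask`, and the `max_tokens` value, are illustrative and not taken from the article.

```python
import json
import urllib.request

# Assumption: llama-server was started locally and listens on its default port.
BASE_URL = "http://127.0.0.1:8080"


def build_chat_request(prompt, base_url=BASE_URL, max_tokens=2048):
    """Build (but do not send) a chat completion request for llama-server."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,  # illustrative limit, not from the article
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("Generate an SVG of a pelican riding a bicycle")` would return the model's reply as a string, which could then be saved to an `.svg` file for inspection.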
