How to fine-tune open LLMs in 2025 with Hugging Face

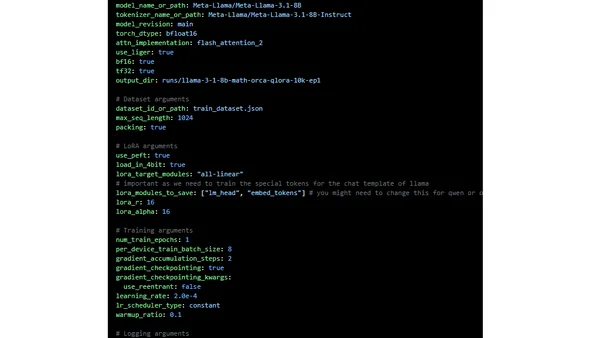

This article provides a detailed, advanced tutorial for fine-tuning open-source LLMs in 2025 using the Hugging Face ecosystem. It covers optimization techniques like QLoRA and Spectrum, distributed training with DeepSpeed, performance improvements with Flash Attention, and a workflow from dataset preparation to model evaluation, targeting developers with consumer-grade GPUs.
