Skill Eval

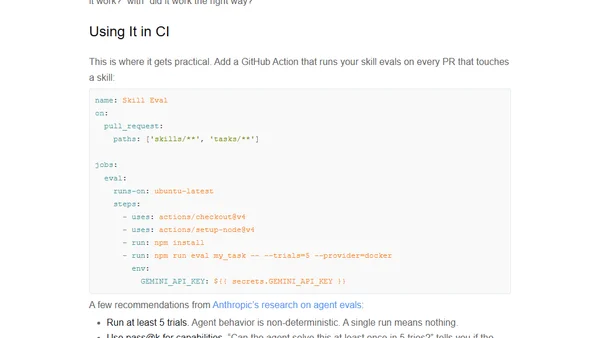

Read OriginalThe article discusses the importance of testing AI agent skills (procedural instructions for tools like Gemini and Claude) to prevent silent failures. It introduces Skill Eval, a TypeScript framework that runs agents in Docker containers to benchmark skill performance using deterministic and LLM-based graders. It also covers integrating these tests into CI/CD pipelines like GitHub Actions.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser

Top of the Week

No top articles yet