MicroGrad.jl: Part 5 MLP

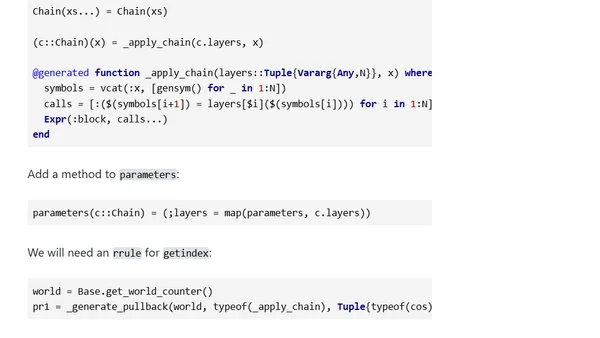

This article is the fifth part of a series on implementing automatic differentiation in Julia. It shows how the MicroGrad.jl package can serve as the backbone of a machine learning framework similar to Flux.jl. The tutorial walks through building a multi-layer perceptron (MLP), implementing layers such as ReLU and Dense, and training the network to classify points from the non-linear moons dataset.
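The following minimal plain-Julia sketch illustrates the Dense and ReLU layers mentioned above as a Flux-style forward pass. It makes no use of automatic differentiation. The names `relu`, `Dense`, and `Chain` are hypothetical stand-ins chosen for this illustration, not the actual MicroGrad.jl API. Gradients, which MicroGrad.jl would supply during training, are left out.

```julia
# Hypothetical sketch: Flux-style layers in plain Julia.
# `relu`, `Dense` and `Chain` are illustrative names, NOT the MicroGrad.jl API.

# Rectified linear unit: zero for negative inputs, identity otherwise.
relu(x) = max(zero(x), x)

# A fully connected layer: y = activation.(W * x .+ b)
struct Dense{M<:AbstractMatrix, V<:AbstractVector, F}
    weight::M
    bias::V
    activation::F
end

# Convenience constructor: small random weights, zero bias.
# `identity` as the default activation means a purely linear layer.
Dense(in::Int, out::Int, activation=identity) =
    Dense(0.1 .* randn(out, in), zeros(out), activation)

# Forward pass. `x` is either one sample (vector of length `in`)
# or a batch (matrix of size in × N, one column per sample).
(d::Dense)(x::AbstractVecOrMat) = d.activation.(d.weight * x .+ d.bias)

# Compose layers by applying them left to right.
struct Chain{T<:Tuple}
    layers::T
end
Chain(layers...) = Chain(layers)
(c::Chain)(x) = foldl((h, layer) -> layer(h), c.layers; init=x)
```

For the two-dimensional moons data, a model such as `Chain(Dense(2, 16, relu), Dense(16, 16, relu), Dense(16, 2))` would map a 2×N batch of points to 2×N class scores. Training it would additionally need a loss function and the gradients that the series' automatic differentiation provides.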
