Control UIs using wireless earbuds and on-face interactions

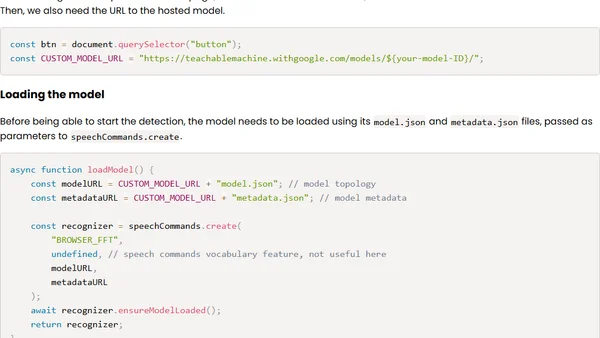

This article details a personal experiment to replicate academic research on using wireless earbud microphones to capture the sounds of facial touch gestures, such as taps and swipes. The author uses JavaScript, TensorFlow.js, and Google's Teachable Machine to train a model that classifies these sounds, then maps each recognized gesture to a UI control, such as scrolling a webpage.
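The final step, turning classifier output into a UI action, can be sketched as below. This is not the author's code: it assumes the model's per-class scores have already been paired with their class names as `{ label, probability }` objects, and the labels (`"swipe-up"`, `"swipe-down"`), the 0.8 confidence threshold, and the 200 px scroll step are all hypothetical. A threshold matters here because ambient noise will always produce *some* top class; only confident predictions should move the page.

```typescript
// One classifier score paired with its class name (assumed shape).
interface Prediction {
  label: string;
  probability: number;
}

// Return the most likely label, or null if no class clears the threshold.
function topGesture(preds: Prediction[], threshold = 0.8): string | null {
  if (preds.length === 0) return null;
  let best = preds[0];
  for (const p of preds) {
    if (p.probability > best.probability) best = p;
  }
  return best.probability >= threshold ? best.label : null;
}

// Hypothetical scroll step in pixels per recognized swipe.
const SCROLL_STEP = 200;

// Map a gesture label to a vertical scroll offset; unknown or null labels do nothing.
function scrollDeltaFor(label: string | null): number {
  switch (label) {
    case "swipe-up":
      return -SCROLL_STEP;
    case "swipe-down":
      return SCROLL_STEP;
    default:
      return 0;
  }
}

// Apply one round of predictions; `scroll` is injected so the logic is testable outside a browser.
function applyGesture(
  preds: Prediction[],
  scroll: (dy: number) => void,
  threshold = 0.8,
): void {
  const dy = scrollDeltaFor(topGesture(preds, threshold));
  if (dy !== 0) scroll(dy);
}
```

In a browser, the callback could be `(dy) => window.scrollBy(0, dy)`, invoked each time the audio model emits a new set of scores.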
