Serverless BERT with HuggingFace, AWS Lambda, and Docker

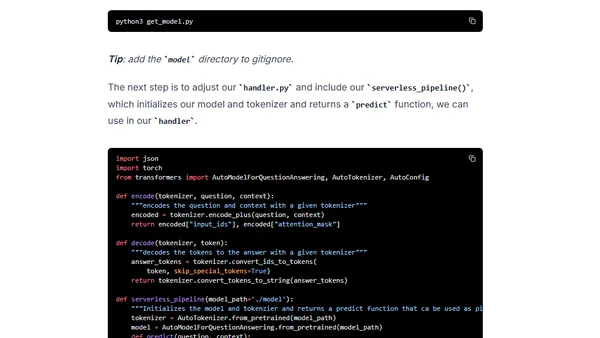

This technical tutorial details how to build and deploy a serverless BERT (Bidirectional Encoder Representations from Transformers) model for question answering. It uses AWS Lambda's new container image support, HuggingFace's Transformers library, Docker, Amazon ECR, and the Serverless Framework to create a scalable NLP API without managing servers.
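To make the architecture concrete, here is a minimal sketch of what a Lambda handler for such a question-answering API might look like. It is not the tutorial's own code. The request shape (`question` and `context` fields in a JSON body), the use of the library's default question-answering checkpoint, and the optional `qa` parameter (a hypothetical hook for swapping in a stub during local testing) are all assumptions.

```python
import json

# Cached across warm invocations so the model loads only once per container.
_qa_pipeline = None


def get_pipeline():
    """Lazily build a HuggingFace question-answering pipeline."""
    global _qa_pipeline
    if _qa_pipeline is None:
        # Imported lazily so that importing this module stays cheap.
        from transformers import pipeline

        # Assumption: the library's default QA checkpoint; a real
        # deployment would likely pin a specific model name.
        _qa_pipeline = pipeline("question-answering")
    return _qa_pipeline


def handler(event, context, qa=None):
    """Lambda entry point; `qa` is a hypothetical hook for injecting a stub."""
    try:
        body = json.loads(event.get("body") or "{}")
        question = body["question"]
        passage = body["context"]
    except (KeyError, TypeError, ValueError):
        return {
            "statusCode": 400,
            "body": json.dumps({"error": "expected JSON with 'question' and 'context'"}),
        }

    qa = qa or get_pipeline()
    result = qa(question=question, context=passage)

    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(
            {"answer": result["answer"], "score": float(result["score"])}
        ),
    }
```

In a container-based deployment, the Docker image would package this module together with `transformers` and the model weights, and the image would be pushed to Amazon ECR for Lambda to run. Caching the pipeline at module level is one common way to limit the cost of model loading to cold starts.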
