Integrating Long-Term Memory with Gemini 2.5

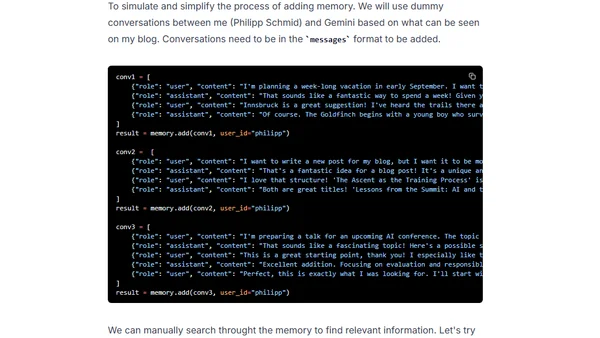

Read OriginalThis technical tutorial explains how to overcome the stateless nature of LLMs by integrating long-term memory into a Google Gemini 2.5 chatbot. It details using the Mem0 open-source library and the Gemini API to extract, store, and retrieve conversation context via vector embeddings, enabling personalized and context-aware responses across sessions.

Comments

No comments yet

Be the first to share your thoughts!

Browser Extension

Get instant access to AllDevBlogs from your browser

Top of the Week

No top articles yet