How to Build a Coding Agent Benchmark with Claude's Agent SDK

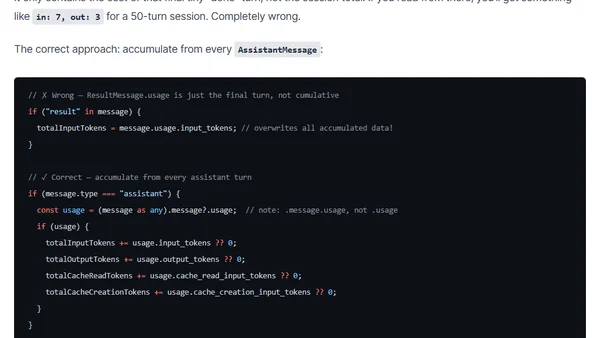

This article walks through building a systematic benchmarking harness for evaluating AI coding agents, using Claude's Agent SDK with TypeScript and Node 24. It covers architecture decisions, metric collection (quality, cost, and behavior), and two eval categories: finding vulnerabilities in a codebase and fixing them. The harness runs each task against different configurations, scores the results objectively, and records the data for comparison. The article also includes code examples, fixture setup with intentionally broken code, and insights on comparing models such as Claude Opus and Sonnet.
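To make the run, score, and record loop concrete, here is a minimal sketch of what such a harness could look like. It is not the article's code: every name below (`Task`, `AgentConfig`, `AgentRunner`, `runBenchmark`, `summarize`) is hypothetical, and the agent call is injected as a function so the sketch assumes nothing about the Agent SDK's actual API.

```typescript
// Hypothetical types for illustration; none of these names come from the Agent SDK.
type Category = "find" | "fix";

interface Task {
  id: string;
  category: Category;
  prompt: string;
  // Objective check: maps the agent's output to a quality score in [0, 1].
  score: (output: string) => number;
}

interface AgentConfig {
  name: string;  // label used when comparing configurations
  model: string; // placeholder model label, passed through to the runner
}

interface RunResult {
  output: string;
  costUsd: number; // cost metric
  turns: number;   // behavior metric
}

// The runner is injected so the harness does not depend on a specific SDK call.
type AgentRunner = (config: AgentConfig, task: Task) => Promise<RunResult>;

interface BenchmarkRecord {
  config: string;
  taskId: string;
  category: Category;
  quality: number;
  costUsd: number;
  turns: number;
}

// Run every task against every configuration and record one row per pair.
async function runBenchmark(
  configs: AgentConfig[],
  tasks: Task[],
  run: AgentRunner,
): Promise<BenchmarkRecord[]> {
  const records: BenchmarkRecord[] = [];
  for (const config of configs) {
    for (const task of tasks) {
      const result = await run(config, task);
      records.push({
        config: config.name,
        taskId: task.id,
        category: task.category,
        quality: task.score(result.output),
        costUsd: result.costUsd,
        turns: result.turns,
      });
    }
  }
  return records;
}

interface ConfigSummary {
  meanQuality: number;
  totalCostUsd: number;
  runs: number;
}

// Aggregate records per configuration so configurations can be compared.
function summarize(records: BenchmarkRecord[]): Map<string, ConfigSummary> {
  const totals = new Map<string, { qualitySum: number; costUsd: number; runs: number }>();
  for (const r of records) {
    const entry = totals.get(r.config) ?? { qualitySum: 0, costUsd: 0, runs: 0 };
    entry.qualitySum += r.quality;
    entry.costUsd += r.costUsd;
    entry.runs += 1;
    totals.set(r.config, entry);
  }
  const summary = new Map<string, ConfigSummary>();
  for (const [config, t] of totals) {
    summary.set(config, {
      meanQuality: t.qualitySum / t.runs,
      totalCostUsd: t.costUsd,
      runs: t.runs,
    });
  }
  return summary;
}
```

In use, `run` would wrap whatever call actually drives the agent, and a stub runner that returns canned output is enough to exercise the harness itself. Keeping scoring inside each `Task` is one possible design choice: it lets the "find" and "fix" categories apply different objective checks while sharing the same loop.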
